What are the options?

- Probably the most obvious and quickest way to get started: use AWS, Google Compute Engine or some other cloud service. (Hopefully soon Fly, Render and other Elixir-centric hosts will offer GPU services too.)

- Rent a dedicated server with a GPU.

- Buy/build your own server and co-locate it.

- Buy/build your own server and run it from your office/home.

Assumptions: your app is already hosted somewhere, so this is primarily about your GPU-related requirements.

What are the costs?

1 - AWS

| Elastic Graphics Accelerator Size | Graphics Memory | Price (per hour) |
|---|---|---|
| eg1.medium | 1 GiB | $0.050 |
| eg1.large | 2 GiB | $0.100 |
| eg1.xlarge | 4 GiB | $0.200 |
| eg1.2xlarge | 8 GiB | $0.400 |

I’ve not used AWS or anything like it, but I’ve read that it’s something like $3 per hour. I’m not sure how accurate that is or how well it translates to real-world usage.

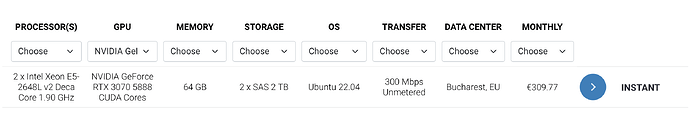

2 - Dedicated server with GPU

The costs seem to vary, but I’ve selected this one because it has a decent amount of RAM and a GPU that you can easily buy yourself (for comparison with the next option). It’s from serverroom.net (I’ve never actually heard of them before, but they are one of the companies advertising when you search for ‘Dedicated server with GPU’):

There are cheaper alternatives available, but they usually start at $150 per month or more.
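To get a rough feel for when a rented dedicated server starts to beat pay-per-hour cloud pricing, here is a minimal Python sketch. It only uses the two ballpark figures mentioned in this post (something like $3 per hour for cloud, and $150 per month for the cheaper dedicated servers), neither of which is a quoted price, and the function name `break_even_hours` is just made up for illustration.

```python
def break_even_hours(cloud_rate_per_hour: float, dedicated_per_month: float) -> float:
    """Hours of GPU use per month at which cloud cost equals the dedicated flat fee."""
    if cloud_rate_per_hour <= 0:
        raise ValueError("cloud_rate_per_hour must be positive")
    return dedicated_per_month / cloud_rate_per_hour


# Ballpark figures from the post, not quoted prices.
CLOUD_RATE = 3.00       # USD per hour (the "something like $3 per hour" figure)
DEDICATED_FEE = 150.00  # USD per month (the cheaper dedicated servers)

hours = break_even_hours(CLOUD_RATE, DEDICATED_FEE)
print(f"Break-even at {hours:.0f} GPU hours per month")
```

With those numbers the break-even is 50 GPU hours a month, so under two hours a day. Below that the cloud would be cheaper, above it the dedicated server would be, assuming the hardware is roughly comparable.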

3 - Build your own server and co-locate it

Server costs will depend on your spec (but you could go with what you can afford and then upgrade the GPU later). If you built a server with the same spec as the dedicated server example above, I guess you’d recoup your costs within half a year or so (a GeForce RTX 3070 is about £500 to buy). Co-location costs will also vary depending on where you’re located and what you need, but in the UK 1U of rack space will cost about £25 a month (2U £40, 3U £113, 4U £135; prices from servercolocation.uk).
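The "recoup your costs" guess above can be sanity-checked with a small payback calculation. This is only a sketch: the £25 a month for 1U is the co-location figure quoted above, but the build cost and the rental price below are hypothetical placeholders that you would swap for your own quotes, and `payback_months` is an illustrative name.

```python
import math


def payback_months(build_cost: float, colo_per_month: float, rental_per_month: float) -> int:
    """Whole months until building and co-locating has cost less than renting."""
    monthly_saving = rental_per_month - colo_per_month
    if monthly_saving <= 0:
        raise ValueError("renting is not more expensive than co-locating, so no payback")
    return math.ceil(build_cost / monthly_saving)


COLO_1U = 25.0       # GBP per month, the 1U price quoted above
BUILD_COST = 1500.0  # GBP, HYPOTHETICAL total build cost including a ~£500 GPU
RENTAL = 300.0       # GBP per month, HYPOTHETICAL price of an equivalent rented server

print(payback_months(BUILD_COST, COLO_1U, RENTAL), "months to recoup the build cost")
```

With these placeholder figures it works out at 6 months. It ignores things like hardware failures, resale value and any setup fees, so treat it as a starting point rather than a proper comparison.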

4 - Build your own server and run it from your office/home

This is the most interesting option to me, because I imagine most people will be hosting their user-facing apps elsewhere (on a cloud service or a dedicated server) and will only be using this server for computational tasks.

I reckon this could be a good option when those tasks are not mission critical or don’t need to be done in the quickest possible time (for instance, where you’re happy to tell your users you’ll email them when their stuff is ready, which is perfect for what I personally want to do!). The only costs here are the server, your usual internet connection and electricity. If you need better contingency or speeds, you could get a leased line (these start from around £100 a month in the UK, depending on what you need and where you’re located).
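For the running costs of the office/home option, a rough estimate could look like the sketch below. The power draw and the electricity price are hypothetical inputs (I have not measured anything), the £100 leased line is the "starts from around" figure above, and `home_monthly_cost` is just an illustrative name.

```python
def home_monthly_cost(avg_watts: float, price_per_kwh: float,
                      hours_per_day: float = 24.0, leased_line: float = 0.0,
                      days: int = 30) -> float:
    """Electricity plus an optional leased line, per month, in the currency of the inputs."""
    kwh = avg_watts / 1000.0 * hours_per_day * days
    return kwh * price_per_kwh + leased_line


# HYPOTHETICAL inputs: 300 W average draw, £0.30 per kWh, running around the clock.
electricity_only = home_monthly_cost(300, 0.30)
with_leased_line = home_monthly_cost(300, 0.30, leased_line=100.0)

print(f"Electricity only: £{electricity_only:.2f} a month")
print(f"With leased line: £{with_leased_line:.2f} a month")
```

With those made-up inputs the electricity comes to roughly £65 a month, so the leased line would be the bigger part of the bill if you needed one. The cost of your usual internet connection is left out because you would be paying for that anyway.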

Pros and Cons?

Can you think of any?

Here are some of my initial thoughts:

- A cloud service will be the easiest and probably the cheapest for low use, but will probably be the most expensive once you get into moderate to high use.

- Dedicated servers (rented) will probably be ideal for those with moderate to high use who are happy to manage the server/s but don’t want the hassle of co-locating or building their own datacenter.

- Co-locating will probably be good for those with moderate to high use, or those who want to save money in the long term (higher initial investment, but you save a lot over time). The largest users could build their own datacenter.

- Office/home, with or without a leased line, will probably be good for those who want to experiment with the technology or are on a tighter budget. (For those who think this is a crazy idea, years ago a large number of companies hosted their own sites in-house with leased lines and back-up generators.) Another advantage is that you can use the server to train your models from the comfort of your own office, perhaps as its primary use before it is deployed.