Yeah, even at my college I’ve not heard that definition either. I’ve always heard that ‘Concurrency is the ability to calculate multiple things at the same time without any of them necessarily having to return first, such as cooperative threading or coroutines’ and ‘Parallelism is performing work at the exact same time as other work’.

I have personally always viewed concurrency as being about modelling and describing your system to express that things occur at the same time. Parallelism I have always seen to be what the underlying hardware/OS actually gives you. So you can model your system in a very concurrent way (typical Erlang/Elixir) even if it only runs on a single core with no actual parallelism.

If that makes sense.

Interestingly, I learned the same definition at university as @NobbZ did. Of course, what ‘exact’ means is a bit vague (no pun intended) and open to interpretation.

Is running the same function, subroutine or program to be considered exact or not?

Looking at it etymologically, we have for concurrent:

Happening at the same time

whereas for parallel we have:

Having the same overall direction

which seems to support this.

In practice, though, I think that the most helpful way to think about it is that concurrency is about structure, whereas parallelism is about execution. In other words: very much what @rvirding is saying, except that in his definition I would replace the word ‘occur’ with ‘are able to occur’:

[…] concurrency is about modelling and describing your system to express that things are able to occur at the same time. Parallelism I have always seen to be what the underlying hardware/OS actually gives you. [In other words: how things actually end up getting executed].

That’s the way I’ve always thought of it.

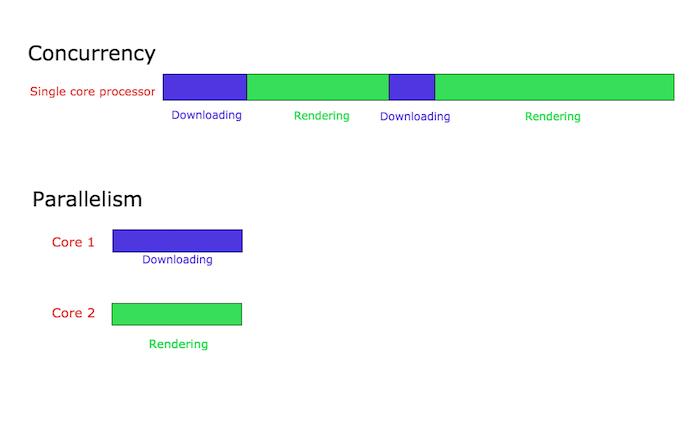

I’m extraordinarily visual, can’t really think at all without flashes of shapes and images throughout my head so I’ve always had an image like this in my head when thinking of concurrency and parallelism:

Concurrency is conceptually doing multiple things at the same time, but could actually be task switching constantly. Thus just because you have a concurrent system doesn’t mean you have a faster system (it doesn’t have to be parallel). Whereas parallel actually means doing two separate things at the same time.
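To make the task-switching picture concrete, here is a minimal Python sketch (my own illustration, not something from the posts above). It assumes two hypothetical coroutines, `worker("A", ...)` and `worker("B", ...)`, run by `asyncio` on a single thread. Their steps interleave, so the program is concurrent, but nothing ever runs in parallel.

```python
import asyncio
import threading


async def worker(name, steps, log):
    """Hypothetical task: record each step, then hand control back to the event loop."""
    for i in range(steps):
        log.append((name, i, threading.get_ident()))
        await asyncio.sleep(0)  # cooperative switch point: lets the other task run


async def main():
    log = []
    # Both workers are "in progress" at the same time, but only one runs at any instant.
    await asyncio.gather(worker("A", 3, log), worker("B", 3, log))
    return log


log = asyncio.run(main())
order = [(name, i) for name, i, _ in log]
thread_ids = {tid for _, _, tid in log}

print(order)            # steps of A and B alternate
print(len(thread_ids))  # every step ran on the same single thread
```

The alternation comes purely from the event loop switching between the tasks at each `await`, which is why a concurrent design like this does not by itself make anything faster.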

From what I hear you all saying, it sounds like my intuition is correct.

Thus, bringing this back to my original question: when I learned that Python has the GIL, I realized its threads aren’t really parallel, just concurrent, and wouldn’t speed up my tasks because my tasks weren’t I/O-bound.
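A rough way to see this for yourself is the sketch below. It assumes a standard CPython build with the GIL enabled, and `count_primes` and `run_with` are hypothetical helpers made up for this illustration. The same CPU-bound function is run once on a thread pool and once on a process pool. On a multi-core machine I would expect the thread version to take roughly as long as running the tasks one after another, while the process version can use several cores, but the actual timings depend on your hardware and Python build.

```python
import time
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def count_primes(limit):
    """CPU-bound work: count the primes below `limit` by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def run_with(executor_cls, limits):
    """Run count_primes over `limits` on the given executor; return (results, seconds)."""
    start = time.perf_counter()
    with executor_cls(max_workers=len(limits)) as pool:
        results = list(pool.map(count_primes, limits))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    limits = [50_000] * 4  # four identical CPU-bound tasks
    for cls in (ThreadPoolExecutor, ProcessPoolExecutor):
        results, seconds = run_with(cls, limits)
        print(f"{cls.__name__}: {seconds:.2f}s, results={results}")
```

Both runs have exactly the same concurrent structure; only the executor, and therefore how the work is actually executed, differs.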

@LegitStack That’s a great simple graphic of it yeah!

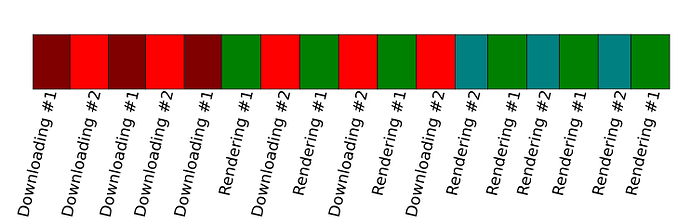

Nice drawing, though I would draw concurrency a bit more like this to show that tasks can be scheduled and switched.

EDIT:

@OvermindDL1 sorry, didn’t realize it had a transparent background. And again, sorry for the bright background; I just took a screenshot.

@vfsoraki Your image needs a background color or something (preferably not something bright)… ^.^;

Woo, much more viewable, though takes a couple seconds to bring to focus due to brightness. ^.^