It’s impressive that a bot can produce this kind of explanation, but I feel like it’s kinda useless, since you can’t sort the good from the bad when you don’t already know the answer…

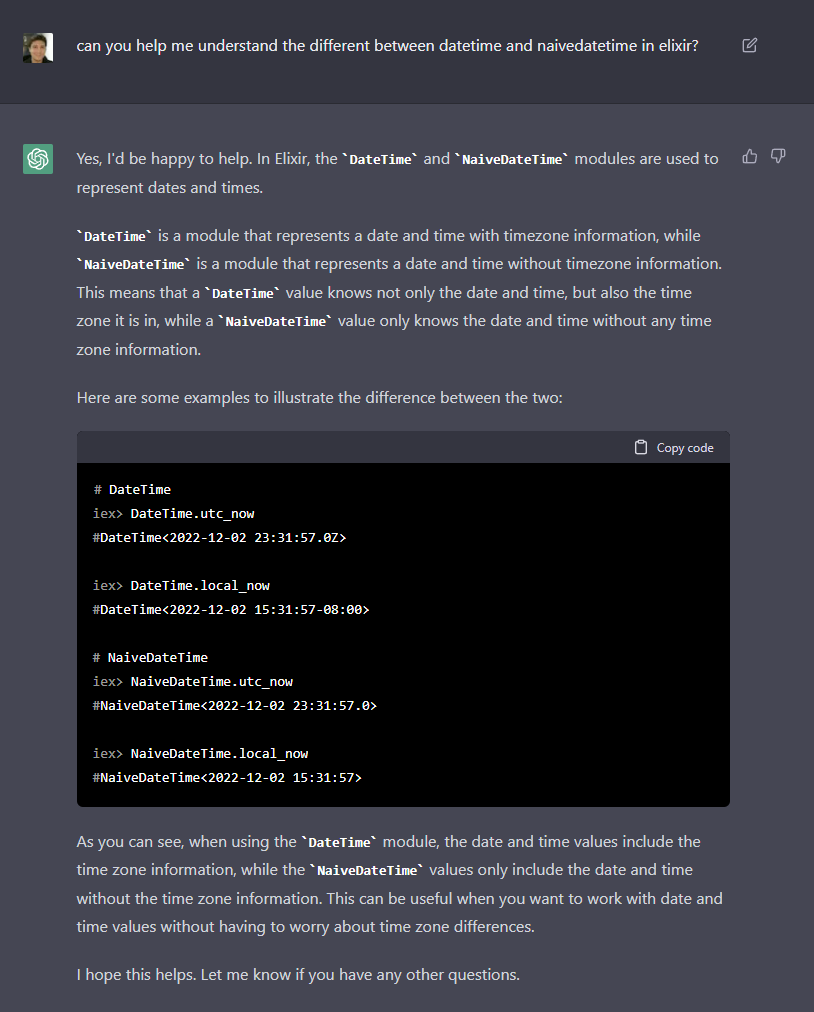

I asked it some general questions one might have about Elixir, e.g. “What is a GenServer?”, “What is the difference between cast and call in GenServer?”, “I just don’t understand how to share global state in Elixir. Can you help me with that?”, and its answers were surprisingly good and accurate (more or less a rewording of the official docs, including the KV store example).

It can even understand and answer questions in French:

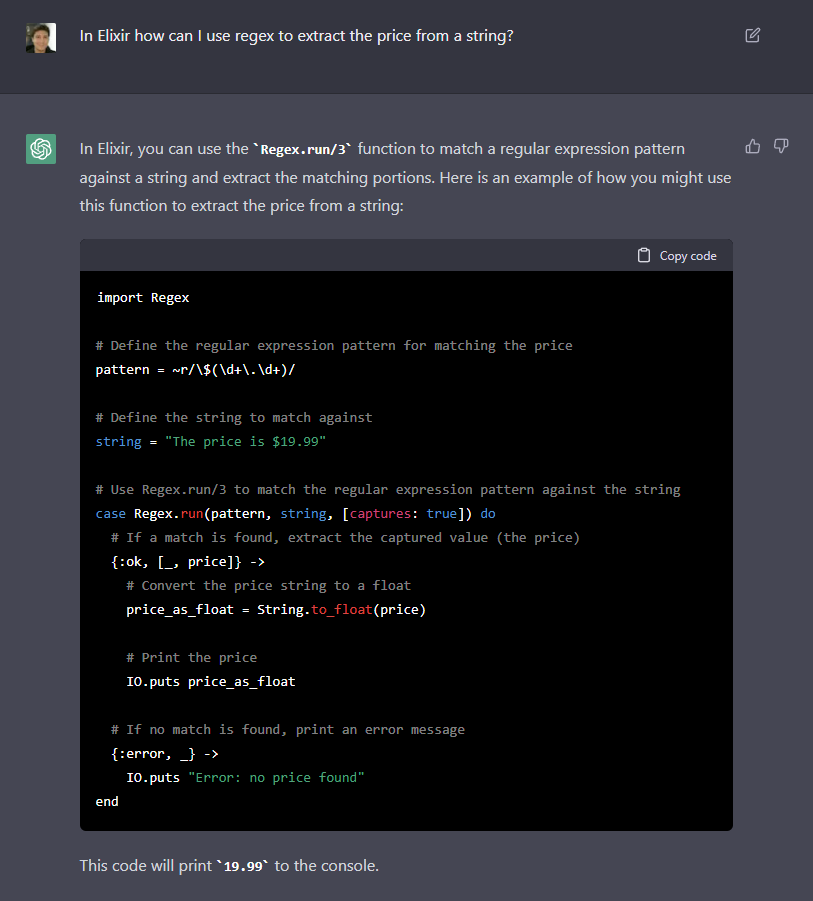

But if you ask slightly more specific questions, it starts to spit out long answers that look right but are either not really helpful or not right at all… And the code examples start to fail.

For example:

Can you explain parser combinators in Elixir please ?

Sure, I’d be happy to help with that! Parser combinators are a way of building parsers for languages by combining smaller parsers into larger ones. In Elixir, parser combinators are typically used to build parsers for languages such as regular expressions, HTML, and other structured text formats.

Parser combinators work by defining small parsers for individual language elements, such as numbers, strings, and punctuation. These small parsers are then combined using functions such as and, or, and many to build larger parsers for more complex language structures. The resulting parsers can be used to parse text and extract the desired information from it.

Here’s an example of how parser combinators might be used in Elixir to parse a simple arithmetic expression:

```elixir
# Define a parser for numbers
number = regex(~r/\d+/)

# Define a parser for the `+` and `-` operators
op = regex(~r/[+-]/)

# Combine the number and op parsers to create a parser for arithmetic expressions
expression = number >> op >> number

# Parse an expression and extract the resulting values
{:ok, [lhs, op, rhs]} = expression.parse("2 + 3")
```

In this example, the number parser is used to parse a sequence of digits, the op parser is used to parse a + or - operator, and the expression parser is used to combine these smaller parsers into a parser for a full arithmetic expression. The expression.parse function is then used to parse a string and extract the resulting values.

I hope that helps! Let me know if you have any other questions about parser combinators in Elixir.

Not wrong (even though there is some odd stuff like “build parsers for languages such as regular expressions”?!), but the code example does not work at all and has some weird quirks, like `>>` instead of a pipe operator… maybe it mixed up the syntax of parsec libraries in other languages? And I like how it makes some kind of OO method call here: `expression.parse("2 + 3")`.
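For comparison, here is a rough sketch of what a working version of that example could look like in plain Elixir. This is only my own minimal illustration, not the API of any existing library: the `TinyParser` module and its `regex/1`, `token/1` and `sequence/1` functions are hypothetical names. It assumes a parser is just an anonymous function that takes the input string and returns `{:ok, value, rest}` or `:error`.

```elixir
defmodule TinyParser do
  # Hypothetical helper module, written for illustration only.
  # A parser is a function: input -> {:ok, value, rest} | :error

  # Parser that succeeds only if `regex` matches at the very start of the input.
  def regex(regex) do
    fn input ->
      case Regex.run(regex, input, return: :index) do
        [{0, len} | _] ->
          # Indexes are byte offsets, so split the binary by bytes.
          <<match::binary-size(len), rest::binary>> = input
          {:ok, match, rest}

        _ ->
          :error
      end
    end
  end

  # Wraps a parser so that leading whitespace is skipped first.
  def token(parser) do
    fn input -> parser.(String.trim_leading(input)) end
  end

  # Runs the parsers one after another, collecting their values in order.
  def sequence(parsers) do
    fn input ->
      result =
        Enum.reduce_while(parsers, {:ok, [], input}, fn parser, {:ok, acc, rest} ->
          case parser.(rest) do
            {:ok, value, new_rest} -> {:cont, {:ok, [value | acc], new_rest}}
            :error -> {:halt, :error}
          end
        end)

      case result do
        {:ok, acc, rest} -> {:ok, Enum.reverse(acc), rest}
        :error -> :error
      end
    end
  end
end

number = TinyParser.token(TinyParser.regex(~r/\d+/))
op = TinyParser.token(TinyParser.regex(~r/[+-]/))
expression = TinyParser.sequence([number, op, number])

# The combined parser is an anonymous function, hence the `.(` call syntax.
{:ok, [lhs, op_sym, rhs], _rest} = expression.("2 + 3")
IO.inspect({lhs, op_sym, rhs})
```

Two things the generated example glossed over: the combined parser has to be called as `expression.("2 + 3")` (there is no `.parse` method on a function), and the spaces around the operator have to be skipped explicitly.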

I also asked it about LiveView, and the answer is… mmh.

Can you tell me about the life-cycle of a LiveView in Phoenix LiveView ?

Yes, I’d be happy to tell you about the life-cycle of a LiveView in Phoenix LiveView. Phoenix LiveView is a framework for building real-time, interactive web applications in the Elixir programming language. LiveViews are a key part of Phoenix LiveView, and they are used to manage the state and rendering of a web page on the server, while handling user interactions and updates in real-time.

The life-cycle of a LiveView in Phoenix LiveView can be divided into three main phases: initialization, rendering, and update.

During the initialization phase, the LiveView is first created on the server and its initial state is set up. This initial state may include data that is passed to the LiveView from the client, such as the current URL or user input. The LiveView then sends the initial HTML for the page to the client and waits for further updates.

In the rendering phase, the LiveView updates its state in response to user interactions and other events. For example, if the user clicks on a button or changes the value of a form input, the LiveView will update its state to reflect the new value. The LiveView also re-renders the HTML for the page to reflect these changes.

In the update phase, the LiveView sends the updated HTML to the client and waits for further updates. The client receives the updated HTML and uses it to update the page in real-time, without needing to refresh the page. The LiveView continues to update its state and re-render the HTML in response to user interactions and other events, and the cycle repeats until the LiveView is terminated.

I hope that helps! The life-cycle of a LiveView in Phoenix LiveView can be a bit complex, but it provides a powerful way to manage the state and rendering of a web page in real-time. If you have any other questions about LiveViews in Phoenix LiveView, feel free to ask and I’d be happy to help.

On the surface it seems good, but… I don’t understand anything about the specifics it tries to explain; it feels like someone who read the documentation page, got the general idea without really understanding it, and is trying to explain it to you without admitting that they didn’t get it.

I think the docs, the forum, Discord and Stack Overflow are here to stay.