I think a bunch of us will be interested in hearing how the new M1 Pro/Max compare to the regular M1 when compiling and running Elixir code. If you have experienced any 2 of the 3, please share!

I have a pretty decked out M1 Max… not entirely sure what is going on, but to be honest, compilation times are pretty bad. My M1 Air is much faster.

I had both computers compile hexpm from scratch (including deps).

The MacBook Air (not even plugged in) did it in under 3 minutes.

The M1 Max took nearly 10 minutes.

I have a software/OS update queued up (12.0.1), so hopefully that helps. It could also be that Spotlight is still indexing and slowing things down. I haven’t run anything really heavy (games/video editing) yet.

I did: on a “base” model MacBook Pro, installing Erlang via asdf took about 2 mins (no fans).

Everything is going according to plan so far.

Do you mind seeing how long it takes to compile (including deps) and run the tests for the hexpm project? i.e.:

mix setup

time mix test # <= this command

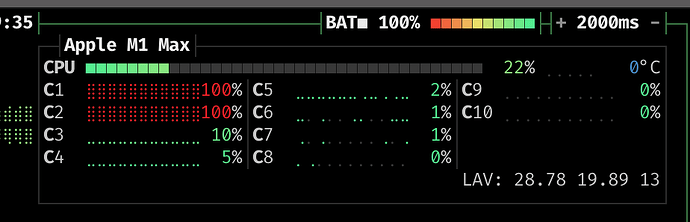

My sneaking suspicion is that the compiler is only using the two efficiency cores for some reason.

During compilation, bpytop looks like

mix test 16.23s user 3.92s system 142% cpu 14.111 total
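For anyone comparing numbers like the line above: the percentage in a zsh-style `time` summary is just (user + system) / total, so it gives a rough idea of how many cores were busy on average. Here is a minimal sketch of that arithmetic. The helper name `avg_busy_cores` is made up for illustration, and it assumes the total is printed in plain seconds (longer runs that print `m:ss` would need extra parsing).

```shell
#!/bin/sh
# Hypothetical helper (not from the thread): estimate the average number of
# busy cores from a zsh-style `time` summary line such as
#   mix test 16.23s user 3.92s system 142% cpu 14.111 total
# Assumption: the "total" field is plain seconds, not m:ss.
avg_busy_cores() {
  printf '%s\n' "$1" | awk '{
    # Find each value by the label that follows it, so the command name
    # in front may contain any number of words.
    for (i = 2; i <= NF; i++) {
      if ($i == "user")   user  = $(i - 1) + 0   # "16.23s" is read as 16.23
      if ($i == "system") sys   = $(i - 1) + 0
      if ($i == "total")  total = $(i - 1) + 0
    }
    if (total > 0) printf "%.2f\n", (user + sys) / total
  }'
}

avg_busy_cores "mix test 16.23s user 3.92s system 142% cpu 14.111 total"
```

For the line quoted above this prints 1.43, i.e. on average fewer than two cores were busy during that run. It says nothing about which cores (efficiency or performance) did the work.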

The new M1s look great and I think I finally have to overcome my thinkpad-trackpoint-addiction.

For anyone that comes by later, you can dramatically speed up compilation times by either:

- Using the Homebrew version of Erlang

- Using the KERL_CONFIGURE_OPTIONS from the Homebrew formula.

I used:

export KERL_CONFIGURE_OPTIONS="
--disable-debug \
--disable-silent-rules \
--enable-dynamic-ssl-lib \
--enable-hipe \
--enable-shared-zlib \
--enable-smp-support \
--enable-threads \
--enable-wx \
--with-ssl=$(brew --prefix openssl@1.1) \
--without-javac \
--enable-darwin-64bit \
--enable-kernel-poll \
--with-dynamic-trace=dtrace \
"

My thinking is that --enable-darwin-64bit is doing the heavy lifting.

Good find! Do you have a better comparison M1 vs M1 Max now?

That same compile that took 10 minutes now takes about 1:30, so about 2x faster than the MacBook Air.

I have just tried merging the ARM JIT PR (jit: Add support for 64-bit ARM processors by jhogberg, Pull Request #4869, erlang/otp) into the OTP 24.1.3 release, which gives me OTP 24 + JIT on ARM.

You can try it out with the following (using ASDF):

export OTP_GITHUB_URL="https://github.com/qdentity/otp"

asdf install erlang ref:v24.1.3

asdf local erlang ref:v24.1.3

Running the snippet from https://twitter.com/akoutmos/status/1453558037063639040

{time, _} = :timer.tc(fn -> 1..5_000_000 |> Enum.reduce(0, fn number, acc -> acc + number end) end)

System.convert_time_unit(time, :microsecond, :millisecond)

finishes in 1s, which is very nice compared to the 1.7s the AMD Ryzen 9 3950X in the tweet achieved! Someone also ran it with the OTP 25 development branch and achieved the same result (1s).

Other improvements to mention here: full recompile on a large project:

37 seconds (Macbook Pro 2017 best i7)

vs

23 seconds (Macbook Pro M1 16 inch 10-core)

Partial recompile on same project (recompiling 42 files):

17 seconds (Macbook Pro 2017 best i7)

vs

5 seconds (Macbook Pro M1 16 inch 10-core)

Overall, very nice speed-ups: roughly 1.6x on the full recompile and 3.4x on the partial one.

Revisiting this, I’m also seeing issues with mix compile only really stressing the 2 efficiency cores. I have tried both OTP 24.1.5 and ref:master via asdf with those build flags. Anyone else seen this issue and worked past it?

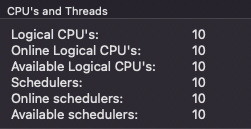

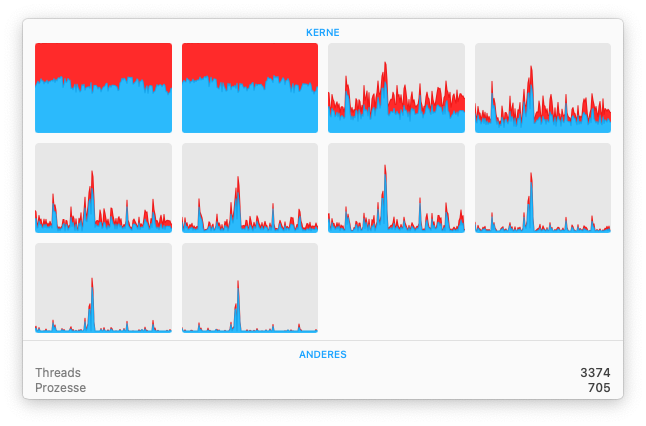

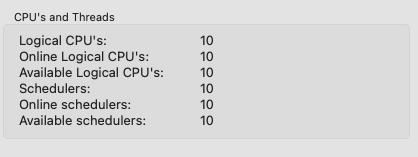

Just to confirm, did you try my fork (export OTP_GITHUB_URL="https://github.com/qdentity/otp") and then asdf install erlang ref:v24.1.3? What do you get when you run the snippet mentioned above? I noticed that when JIT is enabled, the custom KERL_CONFIGURE_OPTIONS are not needed to have the BEAM utilise all cores. And an additional question: what do you see when you run the observer, do you see something like this?

I am having the same issue. Looks like mix compile is only using 2 cores.
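When comparing setups in a thread like this, it can help to post what the installed BEAM reports about itself: native ARM or x86_64, JIT or not, and how many schedulers are online. Below is a rough sketch of such a check. The function name `beam_info` is made up for illustration, and `emu_flavor` is only available on OTP 24 and later, so this assumes one of the builds discussed here.

```shell
#!/bin/sh
# Hypothetical helper (not from the thread): print what a given `erl` reports
# about itself. Pass a path to check a specific install; defaults to the
# `erl` found on PATH. Assumes OTP 24+ (emu_flavor does not exist before that).
beam_info() {
  erl_bin="${1:-erl}"
  if ! command -v "$erl_bin" >/dev/null 2>&1; then
    echo "beam_info: $erl_bin not found" >&2
    return 1
  fi
  "$erl_bin" -noshell -eval '
    io:format("otp_release=~s~n", [erlang:system_info(otp_release)]),
    io:format("architecture=~s~n", [erlang:system_info(system_architecture)]),
    io:format("emu_flavor=~p~n", [erlang:system_info(emu_flavor)]),
    io:format("schedulers_online=~p~n", [erlang:system_info(schedulers_online)]),
    io:format("logical_processors=~p~n", [erlang:system_info(logical_processors)]),
    halt().'
}
```

Run `beam_info` with no arguments to check the `erl` on your PATH. An architecture starting with `aarch64` suggests a native ARM build, while `x86_64` would suggest the build is running under Rosetta; `emu_flavor=jit` means the JIT is active. Note that this only shows how many scheduler threads the BEAM starts, not which cores macOS actually places them on.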

Still struggling with this… Compiling a Phoenix application takes ages: the efficiency cores are heavily loaded while the performance cores seem to be largely idle. Using Erlang 25 (with JIT) installed using brew.

It does not only affect the compilation. These were requests to the login route that should never take 20s:

12:53:52.742 request_id=FvgostrHPGeka6oAAAXi [info] Sent 200 in 21043ms

12:53:52.742 request_id=FvgostrGFVHP8XUAAAZB [info] Sent 200 in 21044ms

12:53:52.742 request_id=Fvgoss8SmTg9ucYAAAWC [info] Sent 200 in 21240ms

12:53:52.769 request_id=FvgosqJW7qHXFQ8AAAYh [info] Sent 200 in 22017ms

This also does not seem to be consistent and only happens sometimes. Very strange.

A quick update here: I found the culprit!

I’ve got a cheap USB SSD case that was connected to the MacBook. As soon as it’s connected, any Elixir compile takes forever to complete and the two efficiency cores are maxed out. Disconnecting this device results in normal performance again. Very strange, I never experienced something like this before.

Interesting, I also encountered this slowdown, but I haven’t seen it recently. Although when I think about it, I did notice that Time Machine backups were running on my external disk each time this happened.