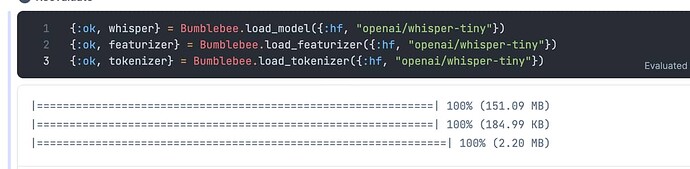

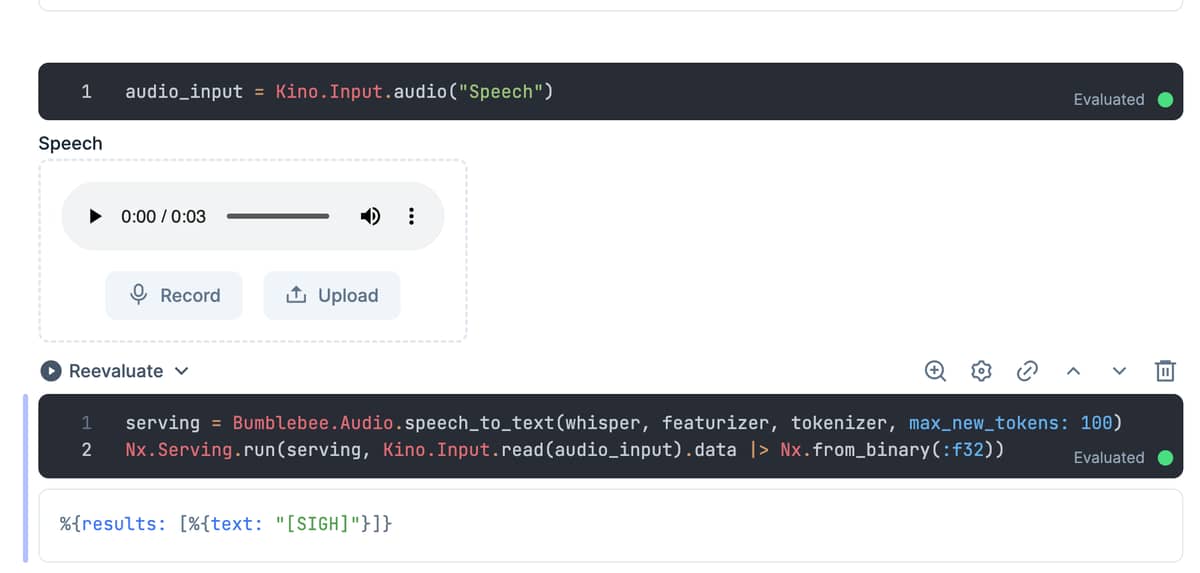

Hi, Elixir Forum. I'm using the whisper-tiny model to convert speech to text, but I always get bad results. I suspect the format of the audio data, such as its endianness, might be the cause. Any ideas?

It works well after changing the sampling rate to 16000!

```elixir
audio_input = Kino.Input.audio("Speech", sampling_rate: 16000)
```

I also found this: Whisper uses ffmpeg to resample the audio to 16000 Hz, so if we skip that resampling step we need to set the sampling rate to 16000 ourselves.
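In case the audio is already recorded at another rate, here is a minimal sketch of what resampling to 16000 Hz means. It is not what ffmpeg does internally: it uses plain linear interpolation with no low-pass filter, so downsampling this way can introduce aliasing. The module name `NaiveResampler` and its function are made up for illustration, and the input is assumed to be a list of mono float samples.

```elixir
defmodule NaiveResampler do
  @moduledoc """
  Hypothetical helper: resamples mono audio by linear interpolation.
  Illustration only, there is no anti-aliasing filter.
  """

  # Nothing to resample.
  def resample([], _from_rate, _to_rate), do: []

  def resample(samples, from_rate, to_rate)
      when from_rate > 0 and to_rate > 0 do
    # A tuple gives constant-time indexed access to the samples.
    source = List.to_tuple(samples)
    source_len = tuple_size(source)

    # Number of output samples covering the same duration (at least one).
    target_len = max(div(source_len * to_rate, from_rate), 1)

    for i <- 0..(target_len - 1) do
      # Position of output sample i on the input timeline.
      pos = i * from_rate / to_rate
      left = min(trunc(pos), source_len - 1)
      right = min(left + 1, source_len - 1)
      frac = pos - left

      # Weighted average of the two neighbouring input samples.
      elem(source, left) * (1 - frac) + elem(source, right) * frac
    end
  end
end
```

For example, `NaiveResampler.resample(samples, 48_000, 16_000)` keeps roughly every third sample. For real recordings it is probably still better to let ffmpeg resample, or to just record at 16000 as above.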

Another question: how do I set the “decoder id” so that it can recognize languages other than English?

Got a solution! You can configure `forced_token_ids` in the Whisper spec:

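```elixir
# set language to `zh`
# each entry is {position, token_id}:
# 50260 = <|zh|>, 50359 = <|transcribe|>, 50363 = <|notimestamps|>
forced_token_ids = [{1, 50260}, {2, 50359}, {3, 50363}]

whisper = put_in(whisper.spec.forced_token_ids, forced_token_ids)
```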

The full list of tokens can be found in `added_tokens.json` in the openai/whisper-base repository (main branch).
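To avoid hard-coding the three tuples for every language, a small lookup helper could build the list. This is only a sketch: the module `WhisperTokens` is hypothetical, and the map contains just the ids I'm sure of for the multilingual checkpoints (`en`, `zh`, and the two task tokens). Any other language id should be looked up in `added_tokens.json` before adding it.

```elixir
defmodule WhisperTokens do
  @moduledoc """
  Hypothetical helper that builds `forced_token_ids` for a language.
  Token ids are for the multilingual Whisper checkpoints; extend the
  maps with values taken from added_tokens.json.
  """

  # Language tokens, e.g. <|en|> and <|zh|>.
  @language_ids %{"en" => 50259, "zh" => 50260}

  # Task tokens: <|transcribe|> and <|translate|>.
  @task_ids %{transcribe: 50359, translate: 50358}

  # <|notimestamps|>
  @no_timestamps 50363

  # Returns a list of {position, token_id} tuples.
  # Raises KeyError for a language or task that is not in the maps.
  def forced_token_ids(language, task \\ :transcribe) do
    [
      {1, Map.fetch!(@language_ids, language)},
      {2, Map.fetch!(@task_ids, task)},
      {3, @no_timestamps}
    ]
  end
end
```

Then `put_in(whisper.spec.forced_token_ids, WhisperTokens.forced_token_ids("zh"))` should give the same result as the hand-written list.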