Hi everyone,

I didn’t want to “spam” the forum with release announcements after my initial post about benchee back in June, but I figured that with all the changes and new features, now might be a good time to write something again! If it’s too much, please tell me.

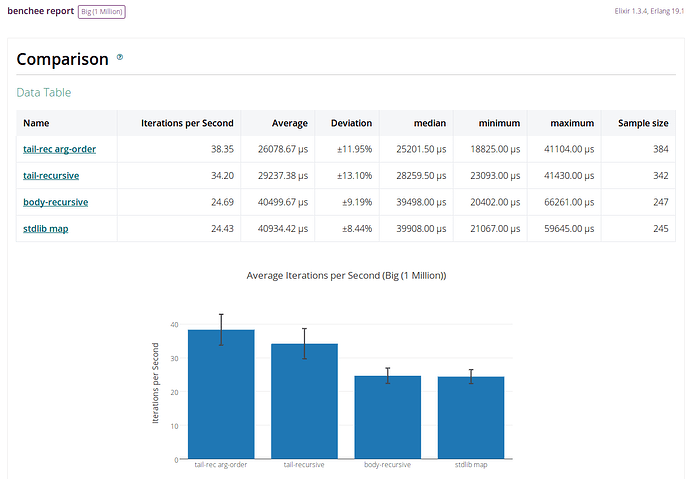

I just released new versions of my benchmarking library benchee and of benchee_csv, and I’m also introducing two new formatters: benchee_json and, finally, benchee_html, which creates nice HTML reports with 4 different graphs that can also be exported as PNG images! And of course, there is also a blog post about all of it.

benchee has come a long way, and I’m particularly excited that it now supports running your suite with different inputs, since different implementations may behave differently depending on input size or structure. I also changed the API of the main interface after a short but good discussion in this very forum, and I gotta say it looks way more Elixir-like now:

```elixir
alias Benchee.Formatters.{Console, HTML}

map_fun = fn(i) -> i + 1 end

inputs = %{
  "Small (10 Thousand)" => Enum.to_list(1..10_000),
  "Middle (100 Thousand)" => Enum.to_list(1..100_000),
  "Big (1 Million)" => Enum.to_list(1..1_000_000)
}

Benchee.run %{
  "tail-recursive" =>
    fn(list) -> MyMap.map_tco(list, map_fun) end,
  "stdlib map" =>
    fn(list) -> Enum.map(list, map_fun) end,
  "body-recursive" =>
    fn(list) -> MyMap.map_body(list, map_fun) end,
  "tail-rec arg-order" =>
    fn(list) -> MyMap.map_tco_arg_order(list, map_fun) end
}, time: 10, warmup: 10, inputs: inputs,
   formatters: [&Console.output/1, &HTML.output/1],
   html: [file: "bench/output/tco_detailed.html"]
```
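The snippet calls a `MyMap` module that isn't shown here. If you want to run the suite yourself, a module along these lines should do; note this is a hypothetical sketch, assuming `map_tco` builds a reversed accumulator and reverses it once at the end, `map_tco_arg_order` is the same algorithm with the accumulator moved to the last argument, and `map_body` is a plain body-recursive map.

```elixir
# Hypothetical MyMap module: the benchmark above uses these function names,
# but this implementation is an assumed sketch, not the original code.
defmodule MyMap do
  # Tail-recursive: prepend results to an accumulator, then reverse once at the end.
  def map_tco(list, function) do
    [] |> do_map_tco(list, function) |> Enum.reverse()
  end

  defp do_map_tco(acc, [], _function), do: acc

  defp do_map_tco(acc, [head | tail], function) do
    do_map_tco([function.(head) | acc], tail, function)
  end

  # Same tail-recursive algorithm, but with the accumulator as the last
  # argument (an assumption about what "arg-order" refers to).
  def map_tco_arg_order(list, function) do
    list |> do_map_tco_arg_order(function, []) |> Enum.reverse()
  end

  defp do_map_tco_arg_order([], _function, acc), do: acc

  defp do_map_tco_arg_order([head | tail], function, acc) do
    do_map_tco_arg_order(tail, function, [function.(head) | acc])
  end

  # Body-recursive: the result list is built while returning from the recursion.
  def map_body([], _function), do: []

  def map_body([head | tail], function) do
    [function.(head) | map_body(tail, function)]
  end
end
```

Defined in the same `.exs` script before the `Benchee.run` call, all three functions return the same list as `Enum.map/2`, so the benchmark compares only how the result is built, not what it contains.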

Thanks to the HTML formatter, this then produces output like what you can see in this example report, or you can get a preview from this image:

So yeah, I hope you like it. It would be great to hear what you like, or even better, what you’re missing, what you don’t like, or any bugs you run into, so that I can improve and extend benchee and its associated libraries.

Thanks!

Tobi