Hi,

A few months ago @josevalim added idle_time to Ecto after I made this issue, which is great, but now I’m seeing queue times on the DB pool, with non-zero idle time.

If there is queueing time, or time spent waiting for a connection to free up, I would expect the idle time for connections to be zero or very close to zero.
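For reference, here is a minimal sketch of how the two values can be read side by side, assuming they come from Ecto's per-query telemetry event. The module name `PoolMetrics`, the handler id, and the event prefix `[:my_app, :repo, :query]` (the default for a repo named `MyApp.Repo`) are placeholders, not my actual setup:

```elixir
defmodule PoolMetrics do
  # Hypothetical module. The event name assumes a repo called MyApp.Repo
  # using the default telemetry prefix.
  @event [:my_app, :repo, :query]

  # Attach once, e.g. from the application's start/2 callback.
  def attach do
    :telemetry.attach("pool-metrics", @event, &__MODULE__.handle_event/4, nil)
  end

  # Ecto reports measurements in native time units; either key may be absent.
  def handle_event(_event, measurements, _metadata, _config) do
    IO.inspect(%{
      queue_ms: to_ms(measurements[:queue_time]),
      idle_ms: to_ms(measurements[:idle_time])
    })
  end

  defp to_ms(nil), do: nil
  defp to_ms(native), do: System.convert_time_unit(native, :native, :millisecond)
end
```

Both numbers are attached to the same query, so each data point pairs how long that query waited for a connection with how long the connection it received had been idle beforehand.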

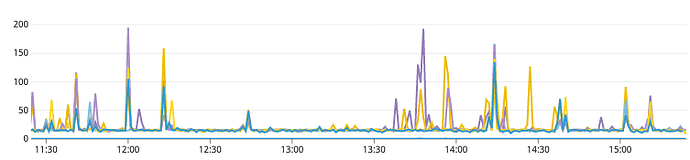

However I’m seeing regular spikes in queue time on a certain repo (ms on the y axis, different lines are different hosts):

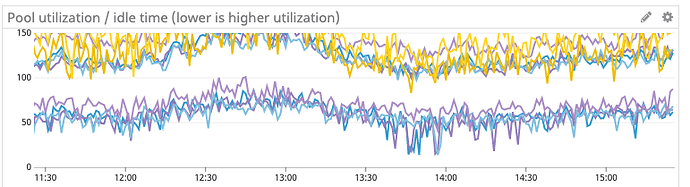

but the minimum idle time never comes close to zero (the lower band is minimum value, also in ms):

From the first graph, I’d assume I have to increase the DB pool size because of queueing. From the second graph, the pool size seems fine.

Am I misunderstanding something about how this should work or how the pool algorithm works?