Code snippets in diagnostics

Elixir v1.15 introduced a new compiler diagnostic format and the ability to print multiple error diagnostics per compilation (in addition to multiple warnings).

With Elixir v1.16, we also include code snippets in exceptions and diagnostics raised by the compiler. For example, a syntax error now includes a pointer to where the error happened:

    ** (SyntaxError) invalid syntax found on lib/my_app.ex:1:17:
        error: syntax error before: '*'
        │
      1 │ [1, 2, 3, 4, 5, *]
        │                 ^
        │
        └─ lib/my_app.ex:1:17

For mismatched delimiters, it now shows both delimiters:

    ** (MismatchedDelimiterError) mismatched delimiter found on lib/my_app.ex:1:18:
        error: unexpected token: )
        │
      1 │ [1, 2, 3, 4, 5, 6)
        │ │                └ mismatched closing delimiter (expected "]")
        │ └ unclosed delimiter
        │
        └─ lib/my_app.ex:1:18
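The same exceptions can be observed programmatically. The following is a minimal sketch, assuming Elixir v1.16 or later; `parse_message` is a hypothetical helper, and the file name is passed only so the message has a location to report:

```elixir
# Hypothetical helper: parse a snippet and return the exception message
# instead of crashing the caller.
parse_message = fn source ->
  try do
    Code.string_to_quoted!(source, file: "lib/my_app.ex")
    :ok
  rescue
    e in [SyntaxError, TokenMissingError, MismatchedDelimiterError] ->
      Exception.message(e)
  end
end

syntax_message = parse_message.("[1, 2, 3, 4, 5, *]")
delimiter_message = parse_message.("[1, 2, 3, 4, 5, 6)")

IO.puts(syntax_message)
IO.puts(delimiter_message)
```

Rescuing all three exception types keeps the helper usable for any parse failure, not only mismatched delimiters.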

Error and warning diagnostics also include code snippets. When possible, we will show precise spans, such as on undefined variables:

      error: undefined variable "unknown_var"
      │
    5 │     a - unknown_var
      │         ^^^^^^^^^^^
      │
      └─ lib/sample.ex:5:9: Sample.foo/1

Otherwise the whole line is underlined:

    error: function names should start with lowercase characters or underscore, invalid name CamelCase
      │
    3 │ def CamelCase do
      │ ^^^^^^^^^^^^^^^^
      │
      └─ lib/sample.ex:3

A huge thank you to Vinícius Muller for working on the new diagnostics.

Revamped documentation

Elixir’s Getting Started guide has been made part of the Elixir repository and incorporated into ExDoc. This was an opportunity to revisit and unify all official guides and references.

We have also incorporated and extended the work on Understanding Code Smells in Elixir Functional Language, by Lucas Vegi and Marco Tulio Valente, from ASERG/DCC/UFMG, into the official documentation in the form of anti-patterns. The anti-patterns are divided into four categories: code-related, design-related, process-related, and meta-programming. Our goal is to give all developers examples of potential anti-patterns, with context and guidance on how to improve their codebases.

Another ExDoc feature we have incorporated in this release is the addition of cheatsheets, starting with a cheatsheet for the Enum module. If you would like to contribute future cheatsheets to Elixir itself, feel free to start a discussion with an issue.

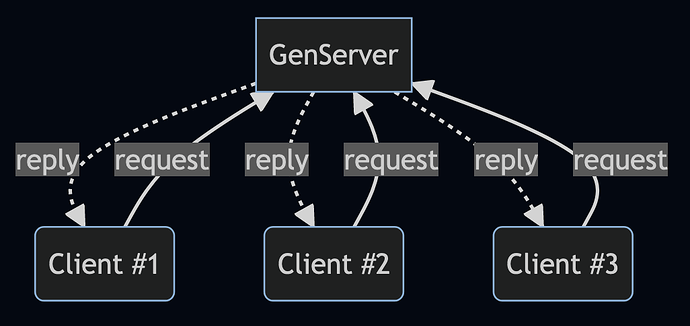

Finally, we have started enriching our documentation with Mermaid.js diagrams. You can find examples in the GenServer and Supervisor docs.

v1.16.0-rc.0 (2023-10-31)

1. Enhancements

EEx

- [EEx] Include relative file information in diagnostics

Elixir

- [Code] Automatically include columns in parsing options

- [Code] Introduce `MismatchedDelimiterError` for handling mismatched delimiter exceptions

- [Code.Fragment] Handle anonymous calls in fragments

- [Code.Formatter] Trim trailing whitespace on heredocs with `\r\n`

- [Kernel] Suggest module names based on suffix and casing errors when the module does not exist in `UndefinedFunctionError`

- [Kernel.ParallelCompiler] Introduce `Kernel.ParallelCompiler.pmap/2` to compile multiple additional entries in parallel

- [Kernel.SpecialForms] Warn if `True`/`False`/`Nil` are used as aliases and there is no such alias

- [Macro] Add `Macro.compile_apply/4`

- [Module] Add support for `@nifs` annotation from Erlang/OTP 25

- [Module] Add support for missing `@dialyzer` configuration

- [String] Update to Unicode 15.1.0

- [Task] Add `:limit` option to `Task.yield_many/2`
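As an illustration of the new `:limit` option, here is a small sketch, assuming Elixir v1.16 or later. The sleep durations are arbitrary values chosen for the example; the call returns once two tasks have replied, and tasks that have not replied are paired with `nil`:

```elixir
# Three tasks: two finish quickly, one sleeps far longer.
tasks =
  for ms <- [10, 20, 5_000] do
    Task.async(fn ->
      Process.sleep(ms)
      ms
    end)
  end

# Return as soon as two tasks have replied or 10 seconds have passed.
results = Task.yield_many(tasks, limit: 2, timeout: 10_000)

# Collect the values of the tasks that replied.
finished = for {_task, {:ok, value}} <- results, do: value

# Tasks that have not replied yet are still running; shut them down explicitly.
for {task, nil} <- results, do: Task.shutdown(task, :brutal_kill)

IO.inspect(finished, label: "finished")
```

The explicit shutdown matters here because reaching the limit before the timeout does not trigger the `:on_timeout` behaviour.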

Mix

- [mix] Add `MIX_PROFILE` to profile a list of comma separated tasks

- [mix compile.elixir] Optimize scenario where there are thousands of files in `lib/` and one of them is changed

- [mix test] Allow testing multiple file:line at once, such as `mix test test/foo_test.exs:13 test/bar_test.exs:27`

2. Bug fixes

Elixir

- [Code.Fragment] Fix crash in `Code.Fragment.surround_context/2` when matching on `->`

- [IO] Raise when using `IO.binwrite/2` on terminated device (mirroring `IO.write/2`)

- [Kernel] Do not expand aliases recursively (the alias stored in `Macro.Env` is already expanded)

- [Kernel] Ensure `dbg` module is a compile-time dependency

- [Kernel] Warn when a private function or macro uses `unquote/1` and the function/macro itself is unused

- [Kernel] Do not define an alias for nested modules starting with `Elixir.` in their definition

- [Kernel.ParallelCompiler] Consider a module has been defined in `@after_compile` callbacks to avoid deadlocks

- [Path] Ensure `Path.relative_to/2` returns a relative path when the given argument does not share a common prefix with `cwd`

ExUnit

- [ExUnit] Raise on incorrectly dedented doctests

Mix

- [Mix] Ensure files with duplicate modules are recompiled whenever any of the files change

3. Soft deprecations (no warnings emitted)

Elixir

- [File] Deprecate `File.stream!(file, options, line_or_bytes)` in favor of keeping the options as last argument, as in `File.stream!(file, line_or_bytes, options)`

- [Kernel.ParallelCompiler] Deprecate `Kernel.ParallelCompiler.async/1` in favor of `Kernel.ParallelCompiler.pmap/2`

- [Path] Deprecate `Path.safe_relative_to/2` in favor of `Path.safe_relative/2`
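The new `File.stream!/3` argument order can be sketched as follows, assuming Elixir v1.16 or later. The scratch file name and its contents are made up for the example:

```elixir
# Hypothetical scratch file used only for this example.
path =
  Path.join(
    System.tmp_dir!(),
    "stream_example_#{System.unique_integer([:positive])}.txt"
  )

File.write!(path, "abcdefgh")

# v1.16 argument order: line_or_bytes comes second, options last.
chunks =
  path
  |> File.stream!(3, [])
  |> Enum.to_list()

File.rm!(path)

IO.inspect(chunks)
# → ["abc", "def", "gh"]
```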

4. Hard deprecations

Elixir

- [Date] Deprecate inferring a range with negative step, call `Date.range/3` with a negative step instead

- [Enum] Deprecate passing a range with negative step on `Enum.slice/2`, give `first..last//1` instead

- [Kernel] `~R/.../` is deprecated in favor of `~r/.../`. This is because `~R/.../` still allowed escape codes, which did not fit the definition of uppercase sigils

- [String] Deprecate passing a range with negative step on `String.slice/2`, give `first..last//1` instead
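The replacements for these deprecated forms might look like the sketch below. The input values are arbitrary and chosen only for illustration:

```elixir
# An explicit step of 1 avoids the deprecated inferred negative step.
middle = Enum.slice([1, 2, 3, 4, 5], 1..-2//1)
# → [2, 3, 4]

trimmed = String.slice("elixir", 1..-2//1)
# → "lixi"

# Descending date ranges take an explicit negative step.
days =
  ~D[2023-10-31]
  |> Date.range(~D[2023-10-29], -1)
  |> Enum.to_list()
# → [~D[2023-10-31], ~D[2023-10-30], ~D[2023-10-29]]

# The lowercase ~r sigil replaces the deprecated ~R.
has_digits = Regex.match?(~r/\d+/, "v1.16")
# → true
```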

ExUnit

- [ExUnit.Formatter] Deprecate `format_time/2`, use `format_times/1` instead

Mix

- [mix compile.leex] Require `:leex` to be added as a compiler to run the `leex` compiler

- [mix compile.yecc] Require `:yecc` to be added as a compiler to run the `yecc` compiler
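A project that relies on these compilers would list them explicitly in its `mix.exs`. The fragment below is a sketch for a hypothetical `:my_app` project; the module name, app name, and version are placeholders:

```elixir
# mix.exs for a hypothetical :my_app project
defmodule MyApp.MixProject do
  use Mix.Project

  def project do
    [
      app: :my_app,
      version: "0.1.0",
      # Run the leex and yecc compilers before the default ones.
      compilers: [:leex, :yecc] ++ Mix.compilers()
    ]
  end
end
```

Projects that use only one of the two tools need to add only that compiler.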