Hi! I’ve been working with Pipecat in Python for quite a while now. It’s effortless to set up, but once you build AI voice agents at scale, it starts to show its limitations. In particular, Pipecat struggles with:

- concurrency: Pipecat uses asyncio, so only I/O is concurrent, and CPU-bound work in one processor blocks the event loop for everything else. The Elixir version is built on top of BEAM processes.

- frame reliability: if an individual frame processor in Pipecat crashes, it’s really tricky to recover it. On the BEAM, supervisors give us that recovery for free.

- error handling: Pipecat swallows errors, since it’s hard to propagate them across async calls, which makes monitoring tedious. In Elixir, we could emit OTEL spans through the OpenTelemetry libraries.

- fault tolerance: in Pipecat, crashes are not isolated, so one crash can affect the whole system.

- simplicity: since Pipecat is object-oriented, with mutable state spread across many objects, it’s especially challenging to trace state changes. Building on top of Pipecat is extremely error-prone and difficult, and race conditions are inevitable.
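To make the process-per-processor idea from the list above more concrete, here is a minimal sketch. It is not Feline’s actual code: it assumes each processor is a plain module implementing a hypothetical `Sketch.Processor` behaviour (`init/1` and `handle_frame/2`), and that each one runs in its own supervised GenServer, mirroring the `{module, opts}` tuples that `Pipeline.new` takes. All `Sketch.*` modules and the `:sketch_stage_N` registered names are made up for illustration.

```elixir
# Hypothetical sketch: Sketch.* modules and callbacks are illustrative, not Feline's API.
defmodule Sketch.Processor do
  # Each processor is plain functional code: one frame in, zero or more frames out.
  @callback init(keyword()) :: {:ok, term()}
  @callback handle_frame(frame :: term(), state :: term()) :: {[term()], term()}
end

defmodule Sketch.Stage do
  # Wraps one processor module in its own process, so a crash stays local.
  use GenServer

  def start_link({module, opts, name, next}) do
    GenServer.start_link(__MODULE__, {module, opts, next}, name: name)
  end

  # Delivers a frame to a pid or a registered stage name. If the named stage
  # is mid-restart, the frame is dropped instead of crashing the sender.
  def forward(dest, frame) when is_pid(dest), do: send(dest, {:frame, frame})

  def forward(dest, frame) when is_atom(dest) do
    case Process.whereis(dest) do
      nil -> :dropped
      pid -> send(pid, {:frame, frame})
    end
  end

  @impl true
  def init({module, opts, next}) do
    {:ok, state} = module.init(opts)
    {:ok, %{module: module, state: state, next: next}}
  end

  @impl true
  def handle_info({:frame, frame}, s) do
    {out, state} = s.module.handle_frame(frame, s.state)
    Enum.each(out, &forward(s.next, &1))
    {:noreply, %{s | state: state}}
  end
end

defmodule Sketch.Pipeline do
  # Starts one supervised stage per {module, opts} tuple, wired in order;
  # the last stage forwards its frames to `sink` (any pid).
  def start_link(specs, sink) do
    names = for {_spec, i} <- Enum.with_index(specs), do: :"sketch_stage_#{i}"
    nexts = Enum.drop(names, 1) ++ [sink]

    children =
      Enum.zip([specs, names, nexts])
      |> Enum.map(fn {{mod, opts}, name, next} ->
        Supervisor.child_spec({Sketch.Stage, {mod, opts, name, next}}, id: name)
      end)

    Supervisor.start_link(children, strategy: :one_for_one)
  end

  def push(frame), do: Sketch.Stage.forward(:sketch_stage_0, frame)
end

defmodule Sketch.Upcase do
  @behaviour Sketch.Processor
  @impl true
  def init(_opts), do: {:ok, nil}
  @impl true
  def handle_frame(text, state) when is_binary(text), do: {[String.upcase(text)], state}
  # Any non-binary frame (e.g. :boom) raises FunctionClauseError and kills only this stage.
end

defmodule Sketch.Counter do
  @behaviour Sketch.Processor
  @impl true
  def init(_opts), do: {:ok, 0}
  # Tags every frame with a running count, so we can see that its state survives.
  @impl true
  def handle_frame(text, n), do: {[{n + 1, text}], n + 1}
end
```

With `Sketch.Pipeline.start_link([{Sketch.Upcase, []}, {Sketch.Counter, []}], self())`, pushing `:boom` crashes only the `Sketch.Upcase` process; the supervisor restarts it, while the downstream counter keeps its pid and its count. In this sketch, frames sent to a stage while it is restarting are simply dropped, which a real implementation would need to handle more deliberately.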

Since I really appreciate Elixir’s design and simplicity, especially for building these kinds of systems, I thought it might be a great idea to port Pipecat to it.

Before starting on the implementation myself, I thought it would be worth building a PoC with Claude to see what this could look like, and then asking all of you what you think. I’m not asking you to review the code itself yet, just the general concepts.

So here is what Claude (with a little guidance from me) came up with:

And here’s what the pipeline could look like:

```elixir
# deepgram_key, openai_key, elevenlabs_key, voice_id, pair and @sample_rate
# are assumed to be bound elsewhere in the PoC.
Pipeline.new([
  # Voice activity detection, then streaming speech-to-text
  {Feline.Processors.VADProcessor, start_secs: 0.2, stop_secs: 0.8},
  {Feline.Services.Deepgram.StreamingSTT, api_key: deepgram_key, sample_rate: @sample_rate},
  {Feline.Processors.ConsoleLogger.UserInput, []},
  # User turn goes into the shared conversation context, then to the LLM
  {Feline.Processors.UserContextAggregator, context_agent: pair.agent},
  {Feline.Services.OpenAI.StreamingLLM, api_key: openai_key, model: "gpt-4.1-mini"},
  {Feline.Processors.AssistantContextAggregator, context_agent: pair.agent},
  {Feline.Processors.ConsoleLogger.BotOutput, []},
  # Group streamed LLM output into sentences before text-to-speech
  {Feline.Processors.SentenceAggregator, []},
  {Feline.Services.ElevenLabs.StreamingTTS,
   api_key: elevenlabs_key, voice_id: voice_id, sample_rate: 24_000},
  {Feline.Processors.AudioPlayer, sample_rate: 24_000}
])
```

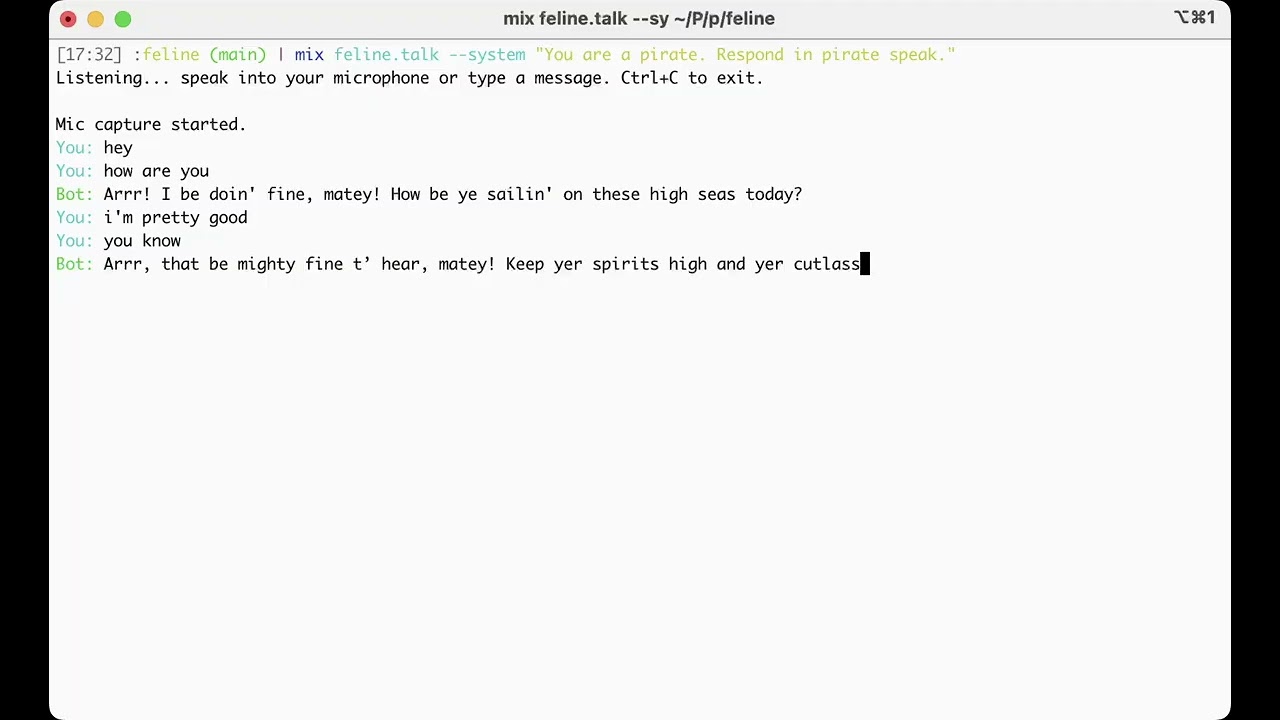

And here’s a demo of how the above works:

It’s somewhat similar in spirit to Membrane, but it aims to stay closely compatible with the original Pipecat library. Anyway, I’ve talked enough already, so now I’d love to hear what you all think!