All in all, from what I understand, it is better not to use GenServer.cast when we want some concurrent operations to happen for sure, because we don’t know when the messages get lost in the air. So what is better to be used in case of concurrent operations? (I am trying to find all the Vampire Numbers in the range 1000000000 to 2000000000 using the power of concurrency)

This is true for any message sent. Erlang messaging between processes is like sending mail to a friend.

If you don’t receive a reply, you’ll never know if your letter got lost or the reply, or if your friend just didn’t sent it.

But still for such a thing I consider something like GenStage or Flow is probably the best approach.

It’s important to realize that on GenServer.call/3 processing in the calling process blocks:

- an asynchronous message is

sent - the calling process enters a blocking receive with a timeout

- the calling process unblocks when either the timeout expires (failing the call) or when the awaited return message arrives

So the calling process isn’t doing anything between the sent and return message.

because we don’t know when the messages get lost in the air.

Sometimes it makes more sense to monitor the process responsible and using a timeout to determine whether the result will be available (in time). That way the casting process doesn’t block (but the process interaction protocol is more work).

I tried using handle_cast everytime I was checking a number and I would update the state whenever I found a Vampire number. Later at the end I would make a Genserver.call to get the final state. But most of the time the genserver.call timed out. When I tried printing things inside handle_cast, I found that the request coming to handle_cast stopped coming even before the range (arg_n to arg_k) was over. Hence I was a little confused about the working of handle_cast and GenServer altogether. That led me to your post here. Code snippet below:

(arg_n..arg_k)

|> Enum.map(fn(each_n)

->

GenServer.cast(pid, {:check_vamp, each_n})

end

)

res = GenServer.call(pid, {:ash},5000)

IO.inspect res

I am now planning to spawn(__MODULE__, :fun_to_check_vamp, [number]). This will potentially create a new process for every number that will be checked in the range.

If I interpret your code correctly you were just piling up :check_vamp requests in pid process’s mailbox. It’s just a single process and code inside that process is strictly sequential.

Likely you where processing a request immediately and the next request wouldn’t be touched until the current request finished - so the process was too busy to run handle_cast again (while more and more requests sat in the mailbox). You might as well just have run your code within a single sequential process.

By the time you hit call/3 pid was hopelessly backed up with :check_vamp requests so that it couldn’t respond to call/3 within 5 seconds.

Please have a look at Task:

That being said these type of coding challenges typically rely on algorithmic improvements that will often outperform simple parallelization of brute force methods.

Thank you for that amazing realization! I was under the impression that because every request going to Genserver.cast is being handled asynchronously, so there will be no interaction between them and the code execution would be quicker, but the process was stopping abruptly in between.

I am definitely going to adopt the Task approach but for now I am running the following code for 10e7 to 2*10e7 and it is taking a long time as well.

(arg_n..arg_k)

|> Enum.map(fn(each_n) ->

pid = spawn(__MODULE__, :vamp_check, [each_n])

Process.monitor(pid)

receive do

msg -> msg

end

end)

I am afraid if I did something wrong. I have switched to check-till-squareRoot approach now instead of checking all permutations (which is a huge improvement when working on one number) but still, for that range it is taking some significant amount of time.

EDIT: It took 30 minutes!

You are still running sequentially.

You are spawning a single process then entering a blocking receive and waiting for the result from that process. Only after the blocking receive is exited can the code advance and spawn the next process.

Now the BEAM can handle a million processes but given the circumstances it isn’t efficient.

Have a look at Task.Supervisor.async_stream/6. It allows you to constrain the number of tasks to the number of schedulers/cores to have the most efficient execution.

https://medium.com/@dinojoaocosta/elixir-findings-asynchronous-task-streams-7f6336227ea

I am awestruck at the improvement! From 30 minutes to 7 minutes. Thanks again for this wonderful thing!

It gives us the freedom to decide the number of concurrent processes too (as you very generously highlighted). Just to make sure I am not leaving any other stone un-turned, here is what I am doing:

arg_n..arg_k

|> Task.async_stream(&Mix.Tasks.Boss.vamp_check/1, max_concurrency: System.schedulers_online)

|> Enum.map(fn {:ok, _result} -> nil end)

It is printing the result in vamp_check itself hence the nil in Enum.map.

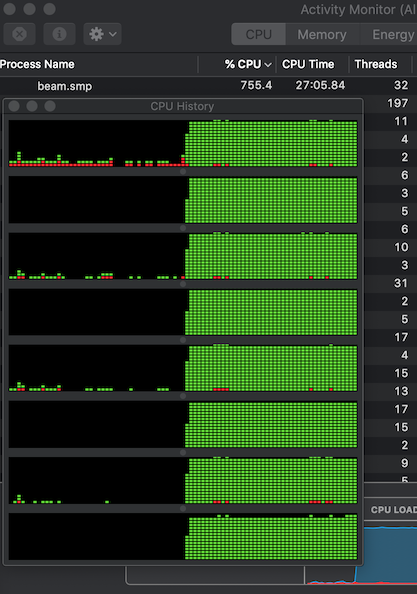

(To be noted that my laptop has System.schedulers_online=12)

I noticed something while execution of the individual processes - they use 11.5 of my 12 cores effectively. I am doing this:

(arg_n..arg_k)

|> Enum.map(fn(each_n) ->

pid = spawn(__MODULE__, :vamp_check, [each_n])

end)

(without the receive blocking this time).

It sounds very disadvantageous to know that when I use Task.Supervisor.async_stream or Task.async_stream (as above), I am only able to utilize maximum 2 cores effectively. Is there a reason for that? Can it be improved?

Here is my Task.Supervisor.async_stream code:

{:ok, supervisor} = Task.Supervisor.start_link()

Task.Supervisor.async_stream(supervisor, arg_n..arg_k, &Mix.Tasks.Boss.vamp_check/1,

max_concurrency: System.schedulers_online()

)

|> Enum.map(fn {:ok, _result} -> nil end)

I am using the time command in Debian shell on my Windows system to calculate the effective CPU cores being used ((user time+sys time)/realTime).

It’s kind of difficult to judge without access to your code - if for some reasons you used blocking operations those 12 processes may not be generating enough work to warrant more cores.

This script floods the cores of my 2016 MBP with work:

$ elixir demo.exs

Schedulers online: 8

Seconds: 265

[

{1001295697, [{19001, 52697}]},

{1001288794, [{10874, 92081}]},

{1001249680, [{10421, 96080}]},

{1001244420, [{24420, 41001}]},

{1001240352, [{25011, 40032}]},

{1001193790, [{10310, 97109}]},

{1001147422, [{24002, 41711}]},

{1001139282, [{11022, 90831}]},

{1001127442, [{14021, 71402}]},

{1001020252, [{20012, 50021}]},

{1000812510, [{12510, 80001}]},

{1000520064, [{20004, 50016}]},

{1000520010, [{20010, 50001}]},

{1000425010, [{25010, 40001}]},

{1000407528, [{12504, 80007}]},

{1000250010, [{20001, 50010}]},

{1000198206, [{10206, 98001}]},

{1000191991, [{10991, 91001}]},

{1000174288, [{14288, 70001}]}

]

$