While writing some code related to Arrays, I ran into a situation in which I wanted to map a function over a tuple.

Now, code that does this is very easy to write if you know exactly what size of tuple you have.

If you don’t… it becomes a little tedious, as Erlang supports tuples with up to 255 elements (EDIT: it turns out that nowadays it supports tuples with up to 2^24 elements…).

But in Elixir, we can have the code write itself, with some simple metaprogramming:

```elixir
defmodule TupleMap do
  @max_tuple_size 255

  def map_tuple({}, _fun), do: {}
  def map_tuple({val}, fun), do: {fun.(val)}
  def map_tuple({val1, val2}, fun), do: {fun.(val1), fun.(val2)}
  # ... etc.
  # But writing this by hand is tiresome. So instead:

  for index <- 3..@max_tuple_size do
    # AST for the variables val1, val2, ..., up to this clause's tuple size
    vars = Enum.map(1..index//1, fn i -> Macro.var(:"val#{i}", nil) end)
    # AST for the tuple pattern {val1, val2, ...}
    vars_tuple = {:{}, [], vars}

    # AST for the calls fun.(val1), fun.(val2), ...
    result_vars =
      Enum.map(vars, fn var ->
        quote do
          unquote(Macro.var(:fun, nil)).(unquote(var))
        end
      end)

    result_tuple = {:{}, [], result_vars}

    def map_tuple(unquote(vars_tuple), fun) do
      unquote(result_tuple)
    end
  end

  # For comparison:
  def map_tuple_through_list(tuple, fun) do
    list = Tuple.to_list(tuple)
    result_list = :lists.map(fun, list)
    List.to_tuple(result_list)
  end
end
```
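To see what the loop actually generates, here is a small sketch that builds the same AST pieces for a single size (3) outside of any module and prints them as source code. It only uses `Macro.var/2`, `Macro.to_string/1` and `Code.eval_quoted/2` from the standard library; the bindings passed to `Code.eval_quoted/2` are made up for the demonstration.

```elixir
# Same construction as in the loop, but for one fixed size.
vars = Enum.map(1..3, fn i -> Macro.var(:"val#{i}", nil) end)
vars_tuple = {:{}, [], vars}

result_vars =
  Enum.map(vars, fn var ->
    quote do
      unquote(Macro.var(:fun, nil)).(unquote(var))
    end
  end)

result_tuple = {:{}, [], result_vars}

# Print the generated pattern and body as Elixir source.
IO.puts(Macro.to_string(vars_tuple))
IO.puts(Macro.to_string(result_tuple))

# Evaluate the generated body with example bindings (illustrative values only).
{evaluated, _binding} =
  Code.eval_quoted(result_tuple, val1: 1, val2: 2, val3: 3, fun: &(&1 * 2))

IO.inspect(evaluated)
```

The two printed lines are the head pattern and the body of the generated three-element clause, and the evaluated result is the doubled tuple.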

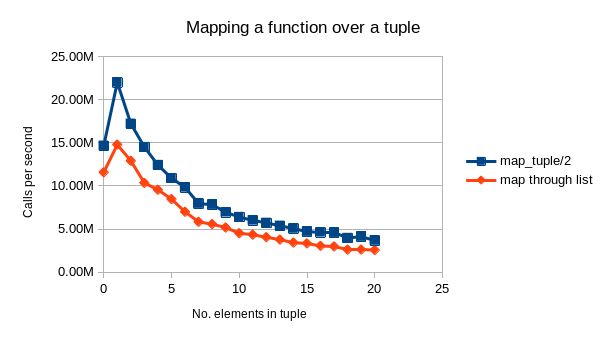

I ran a small benchmark on this code, for tuples with up to 20 elements (it would definitely be interesting to run a longer benchmark later).

The results are interesting: it seems that mapping over the tuple directly is ~30%-50% faster than converting it to a list, mapping over that, and converting back.

The memory usage (not shown in this picture) is also only about a quarter of that of the list-based version.

This benchmark is of course not very scientific, but I still thought it was interesting and something that might be nice to share.
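For anyone who wants to try a comparison like this themselves, below is a rough sketch using only `:timer.tc/1` from the Erlang standard library. It is not the setup used for the graph above: the module name `TupleBenchSketch`, the single hand-written four-element clause and the iteration count are all assumptions made to keep the example short, and a proper benchmarking tool will give far more reliable numbers.

```elixir
defmodule TupleBenchSketch do
  # Hand-written direct clause for one tuple size only (hypothetical example).
  def direct({a, b, c, d}, fun), do: {fun.(a), fun.(b), fun.(c), fun.(d)}

  # The list-based variant, same idea as map_tuple_through_list/2.
  def through_list(tuple, fun) do
    tuple |> Tuple.to_list() |> Enum.map(fun) |> List.to_tuple()
  end

  # Returns the wall-clock time in microseconds for `iterations` calls.
  def time(mapper, tuple, iterations) do
    fun = &(&1 + 1)

    {micros, :ok} =
      :timer.tc(fn ->
        Enum.each(1..iterations, fn _ -> mapper.(tuple, fun) end)
      end)

    micros
  end
end

tuple = {1, 2, 3, 4}
direct_us = TupleBenchSketch.time(&TupleBenchSketch.direct/2, tuple, 100_000)
list_us = TupleBenchSketch.time(&TupleBenchSketch.through_list/2, tuple, 100_000)

IO.puts("direct: #{direct_us} µs, through list: #{list_us} µs")
```

The numbers will vary from run to run and machine to machine, so treat them as a rough indication only.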

I don’t know how common it is to map a function over all elements of a tuple, but if someone wants it, the code above could easily be wrapped up in a library. Or even better: turned into a macro that injects a defp implementation into each module that needs it, so that the tuple call becomes a local call the compiler can inline.
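A minimal sketch of that macro idea could look like the following. The names `InlineTupleMap`, `Point` and the `:max_size` option are hypothetical, and the default size is kept small for the example; it only relies on `Macro.var/2`, `Macro.generate_arguments/2` and `quote`/`unquote`.

```elixir
defmodule InlineTupleMap do
  # `use InlineTupleMap, max_size: n` injects private map_tuple/2 clauses
  # for tuples of 0..n elements into the calling module.
  defmacro __using__(opts) do
    max_size = Keyword.get(opts, :max_size, 8)
    fun = Macro.var(:fun, __MODULE__)

    clauses =
      for size <- 1..max_size//1 do
        vars = Macro.generate_arguments(size, __MODULE__)
        calls = Enum.map(vars, fn var -> quote(do: unquote(fun).(unquote(var))) end)

        quote do
          defp map_tuple(unquote({:{}, [], vars}), unquote(fun)) do
            unquote({:{}, [], calls})
          end
        end
      end

    quote do
      defp map_tuple({}, _fun), do: {}
      unquote_splicing(clauses)
    end
  end
end

defmodule Point do
  use InlineTupleMap, max_size: 4

  # map_tuple/2 is now a private function local to this module.
  def scale(point, factor), do: map_tuple(point, &(&1 * factor))
end

IO.inspect(Point.scale({1, 2, 3}, 2))
```

Because the clauses are private to the using module, tuples larger than `:max_size` raise a `FunctionClauseError`, so the limit has to be chosen per module.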

EDIT: I couldn’t help myself and have run a larger benchmark.