The user sends me a reCAPTCHA token that Google's JS file generates, and I then send this token to the Google API.

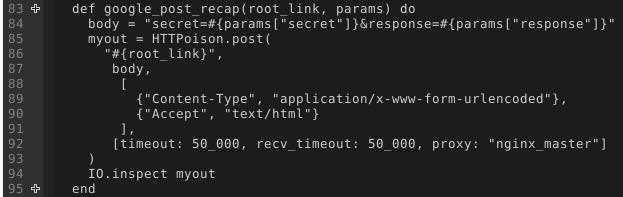

The code that sends the token:

    def google_post_recap(root_link, params) do
      body = "secret=#{params["secret"]}&response=#{params["response"]}"

      HTTPoison.post(
        root_link,
        body,
        [
          {"Content-Type", "application/x-www-form-urlencoded"},
          {"Accept", "text/html"}
        ],
        [timeout: 50_000, recv_timeout: 50_000]
      )
    end
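
The body in `google_post_recap` is built by string interpolation, which works as long as the secret and token contain no reserved characters. As a minimal sketch, assuming you would rather not rely on that, the standard library's `URI.encode_query/1` can build the same form body with percent-encoding. The module name `RecaptchaForm` and the sample values are hypothetical and only for illustration.

```elixir
defmodule RecaptchaForm do
  # Hypothetical helper, not part of the original code: builds an
  # application/x-www-form-urlencoded body for the siteverify request.
  def encode(secret, response) do
    # A list of 2-tuples keeps the key order; values are percent-encoded.
    URI.encode_query([{"secret", secret}, {"response", response}])
  end
end

# Sample values chosen to contain characters that need encoding.
body = RecaptchaForm.encode("my secret", "tok&en=1")
IO.puts(body)
```

The resulting string could be passed as the `body` argument to `HTTPoison.post` in place of the interpolated one.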

and

    # login sender
    def login_register_sender(conn, %{"email" => _email, "password" => _password} = info_of_login) do
      ip_os = TrangellHtmlSite.ip_os(conn)
      info_map = User.login_mapper(info_of_login)

      # Each step must match for the next one to run: Google accepts the token,
      # the decoded body reports success, and the login API answers with 200.
      with {:ok, %HTTPoison.Response{status_code: 200, body: body_of_token}} <-
             TrangellHtmlSite.User.google_recap(info_of_login["g-recaptcha-response"]),
           %{"challenge_ts" => _time, "hostname" => _host, "success" => true} <-
             Jason.decode!(body_of_token),
           {:ok, %HTTPoison.Response{status_code: 200, body: _user_info}} <-
             User.login_sender(info_map, ip_os.ip, ip_os.os) do
        conn
        # Flash text (Persian): "The two-step activation code has been emailed to you.
        # Please check your email and enter that code together with your email in the form below."
        |> put_flash(:info, "کد فعال سازی دو مرحله ای برای شما ایمیل گردید لطفا به ایمیل خود مراجعه کرده و در فرم زیر کد مذکور به همراه ایمیل خود وارد کنید")
        |> redirect(to: "/verify-code")
      end
    end
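
Note that the `with` in `login_register_sender` has no `else`, so any value that fails to match (for example a decoded body with `"success" => false`) is returned from the function as-is instead of a `conn`. A minimal sketch of how the failure cases could be told apart is below. It assumes simplified stand-ins for the real calls: `LoginFlow.run/2` is hypothetical and takes the HTTP status and the already-decoded body directly, so it can run without HTTPoison or Jason.

```elixir
defmodule LoginFlow do
  # Hypothetical stand-in for the controller action: `captcha_result`
  # plays the role of the HTTP call, `decoded_body` the decoded JSON.
  def run(captcha_result, decoded_body) do
    with {:ok, 200} <- captcha_result,
         %{"success" => true} <- decoded_body do
      :redirect_to_verify_code
    else
      # Google answered, but rejected the token.
      %{"success" => false} -> {:error, :captcha_rejected}
      # The request completed with a status other than 200.
      {:ok, status} when is_integer(status) -> {:error, {:unexpected_status, status}}
      # The request itself failed (timeout, DNS, ...).
      {:error, reason} -> {:error, {:request_failed, reason}}
      # Anything else, e.g. a body without a "success" key.
      _other -> {:error, :unexpected_response}
    end
  end
end

IO.inspect(LoginFlow.run({:ok, 200}, %{"success" => true}))
IO.inspect(LoginFlow.run({:ok, 200}, %{"success" => false}))
IO.inspect(LoginFlow.run({:error, :timeout}, %{}))
```

In a real controller each `else` branch would typically end in its own `put_flash` and `redirect`, so that the action always returns a `conn`.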

    # google
    def google_recap(response) do
      TrangellHtmlSite.google_post_recap(
        "https://www.google.com/recaptcha/api/siteverify",
        %{"secret" => "MY secret code", "response" => response}
      )
      |> IO.inspect()
    end

After this code runs, Google sends me a JSON response, which I use like this:

    {:ok,
     %HTTPoison.Response{
       body: "{\n \"success\": true,\n \"challenge_ts\": \"2018-10-31T20:38:43Z\",\n \"hostname\": \"localhost\"\n}",
       headers: [
         {"Content-Type", "application/json; charset=utf-8"},
         {"Date", "Wed, 31 Oct 2018 20:40:01 GMT"},
         {"Expires", "Wed, 31 Oct 2018 20:40:01 GMT"},
         {"Cache-Control", "private, max-age=0"},
         {"X-Content-Type-Options", "nosniff"},
         {"X-XSS-Protection", "1; mode=block"},
         {"Server", "GSE"},
         {"Alt-Svc", "quic=\":443\"; ma=2592000; v=\"44,43,39,35\""},
         {"Accept-Ranges", "none"},
         {"Vary", "Accept-Encoding"},
         {"Transfer-Encoding", "chunked"}
       ],
       request_url: "https://www.google.com/recaptcha/api/siteverify",
       status_code: 200
     }}

That is all of my code. I assume the token sent by the user cannot be trusted on its own, but Google decides whether to verify it or not.
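
Since the decision comes from Google's response rather than from the user, it may help to interpret that response in one place. The sketch below is a rough illustration under stated assumptions: the body has already been decoded into a map (for example with `Jason.decode!`), the module `RecaptchaVerdict` and the `expected_host` parameter are hypothetical, and comparing `"hostname"` against your own host is an optional extra check, not something the original code does.

```elixir
defmodule RecaptchaVerdict do
  # Hypothetical helper: turns a decoded siteverify body into a verdict.

  # Google accepted the token and it was solved on the expected host.
  def check(%{"success" => true, "hostname" => host}, expected_host)
      when host == expected_host,
      do: :ok

  # Google accepted the token, but it was solved on a different host.
  def check(%{"success" => true, "hostname" => host}, _expected_host),
    do: {:error, {:hostname_mismatch, host}}

  # Google rejected the token; "error-codes" is optional in the response.
  def check(%{"success" => false} = body, _expected_host),
    do: {:error, {:rejected, Map.get(body, "error-codes", [])}}

  # Anything that does not look like a siteverify response.
  def check(_other, _expected_host), do: {:error, :malformed_response}
end

# The decoded form of the response body shown earlier in this post.
decoded = %{
  "success" => true,
  "challenge_ts" => "2018-10-31T20:38:43Z",
  "hostname" => "localhost"
}

IO.inspect(RecaptchaVerdict.check(decoded, "localhost"))
```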

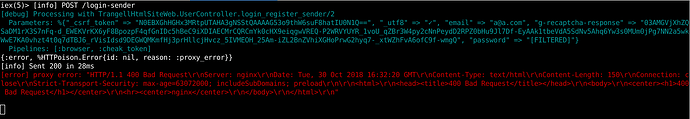

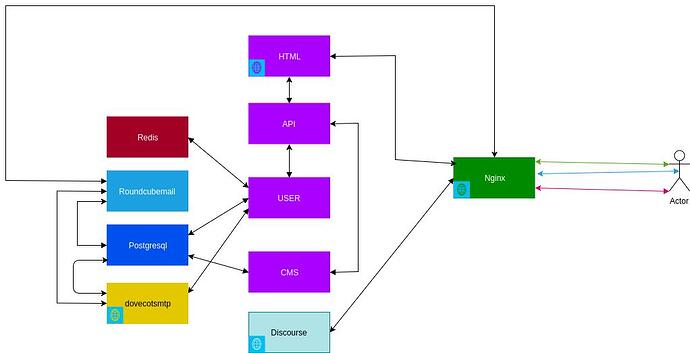

I have a problem using an external API in my services.