I'm working for a new customer that uses microservices, so I've been reading a bit about service meshes, real-time event-driven gateways, and so on. How complex all this is! There should be a compelling need before you adopt it.

I think it all depends on how big your team is and how you want to scale your solution.

If you can fit into a monolith, that's fine, but try to build Netflix on a monolith... good luck.

Large companies like Google, Facebook and Twitter happen to have monolithic repos too. Who knows what their deploy process is like, but having your code base in one repo definitely helps keep things under control.

I remember working on a project 4-5 years ago where we had 100+ repos, and it was a nightmare. This was in the context of Ansible, but just the logistics of keeping readme files consistent across repos was enough pain to warrant developing custom scripts to manage that. I couldn't imagine doing that with a real code base.

In Elixir you can have the best of both worlds then… Umbrellas are in a single repo.

You can have a single repo and still use microservices.

Moving from a monolith to microservices means you are moving into the area of distributed systems.

From the free eBook Designing Distributed Systems by Brendan Burns (a very good book):

https://azure.microsoft.com/en-us/resources/designing-distributed-systems/

Though it is clear as to why you might want to break your distributed application into a collection of different containers running on different machines, it is perhaps somewhat less clear as to why you might also want to break up the components running on a single machine into different containers. To understand the motivation for these groups of containers, it is worth considering the goals behind containerization. In general, the goal of a container is to establish boundaries around specific resources (e.g., this application needs two cores and 8 GB of memory). Likewise, the boundary delineates team ownership (e.g., this team owns this image). Finally, the boundary is intended to provide separation of concerns (e.g., this image does this one thing).

All of these reasons provide motivation for splitting up an application on a single machine into a group of containers. Consider resource isolation first. Your application may be made up of two components: one is a user-facing application server and the other is a background configuration file loader. Clearly, end-user-facing request latency is the highest priority, so the user-facing application needs to have sufficient resources to ensure that it is highly responsive. On the other hand, the background configuration loader is mostly a best-effort service; if it is delayed slightly during times of high user-request volume, the system will be okay. Likewise, the background configuration loader should not impact the quality of service that end users receive. For all of these reasons, you want to separate the user-facing service and the background shard loader into different containers. This allows you to attach different resource requirements and priorities to the two different containers and, for example, ensure that the background loader opportunistically steals cycles from the user-facing service whenever it is lightly loaded and the cycles are free. Likewise, separate resource requirements for the two containers ensure that the background loader will be terminated before the user-facing service if there is a resource contention issue caused by a memory leak or other overcommitment of memory resources.

In addition to this resource isolation, there are other reasons to split your single-node application into multiple containers. Consider the task of scaling a team. There is good reason to believe that the ideal team size is six to eight people. In order to structure teams in this manner and yet still build significant systems, we need to have small, focused pieces for each team to own. Additionally, often some of the components, if factored properly, are reusable modules that can be used by many teams. Consider, for example, the task of keeping a local filesystem synchronized with a git source code repository. If you build this Git sync tool as a separate container, you can reuse it with PHP, HTML, JavaScript, Python, and numerous other web-serving environments. If you instead factor each environment as a single container where, for example, the Python runtime and the Git synchronization are inextricably bound, then this sort of modular reuse (and the corresponding small team that owns that reusable module) are impossible.

Finally, even if your application is small and all of your containers are owned by a single team, the separation of concerns ensures that your application is well understood and can easily be tested, updated, and deployed. Small, focused applications are easier to understand and have fewer couplings to other systems. This means, for example, that you can deploy the Git synchronization container without having to also redeploy your application server. This leads to rollouts with fewer dependencies and smaller scope. That, in turn, leads to more reliable rollouts (and rollbacks), which leads to greater agility and flexibility when deploying your application.
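To make the resource-isolation argument from the excerpt a bit more concrete, here is a minimal Python sketch. It is only a toy model and not how any real container runtime or orchestrator decides what to kill. The `Container` class, the `priority` field, the `pick_victims` function, and all the numbers are hypothetical names and values made up for illustration. The idea is just that once the two components are separate containers with separate priorities, the best-effort loader gets terminated before the user-facing server when memory runs short.

```python
from dataclasses import dataclass


@dataclass
class Container:
    # Hypothetical model of a container; not tied to any real runtime API.
    name: str
    priority: int        # higher means more important to keep running
    memory_used_mb: int  # current memory usage in megabytes


def pick_victims(containers, node_memory_mb):
    """Return names of containers to terminate, lowest priority first,
    until total memory usage fits within the node's memory."""
    total = sum(c.memory_used_mb for c in containers)
    victims = []
    for c in sorted(containers, key=lambda c: c.priority):
        if total <= node_memory_mb:
            break
        victims.append(c.name)
        total -= c.memory_used_mb
    return victims


# The user-facing server is high priority; the background loader is best effort.
app_server = Container("app-server", priority=10, memory_used_mb=5000)
config_loader = Container("config-loader", priority=1, memory_used_mb=4000)

# Assumed scenario: the loader leaks memory on a node with 8192 MB available.
print(pick_victims([app_server, config_loader], node_memory_mb=8192))
```

If both components lived in one container, there would be nothing to choose between: the leak in the loader would take the user-facing server down with it.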

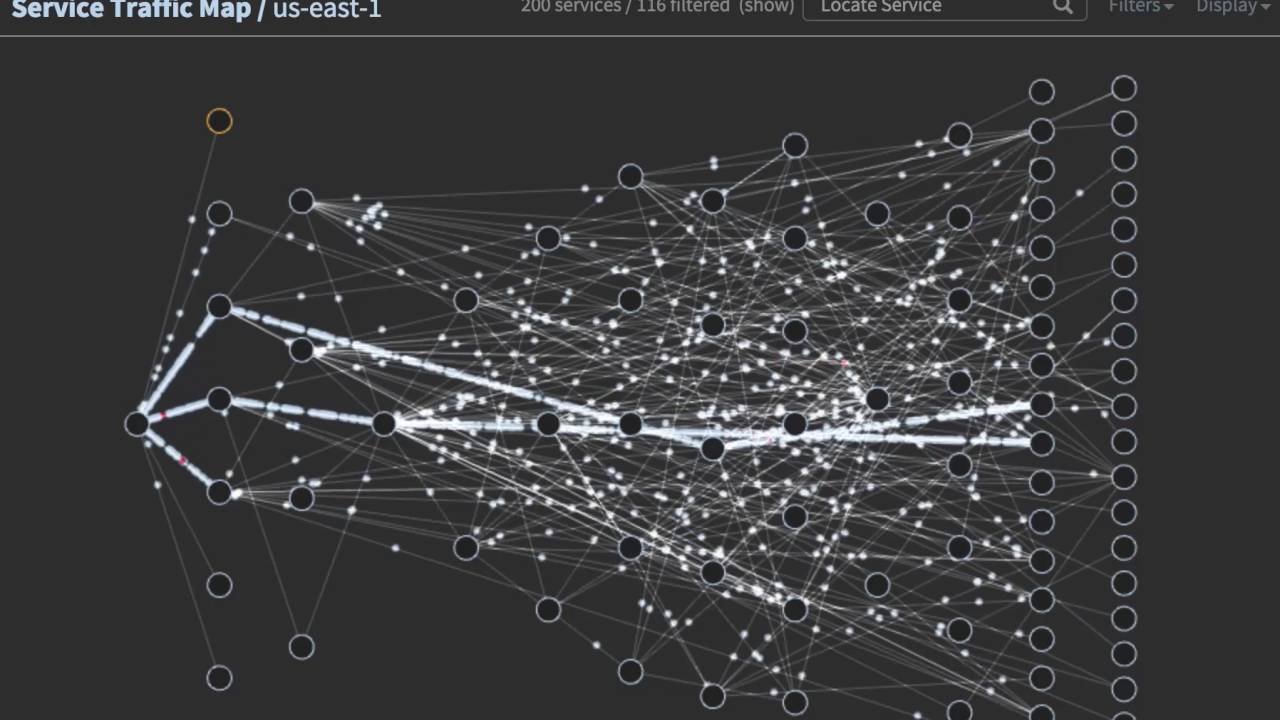

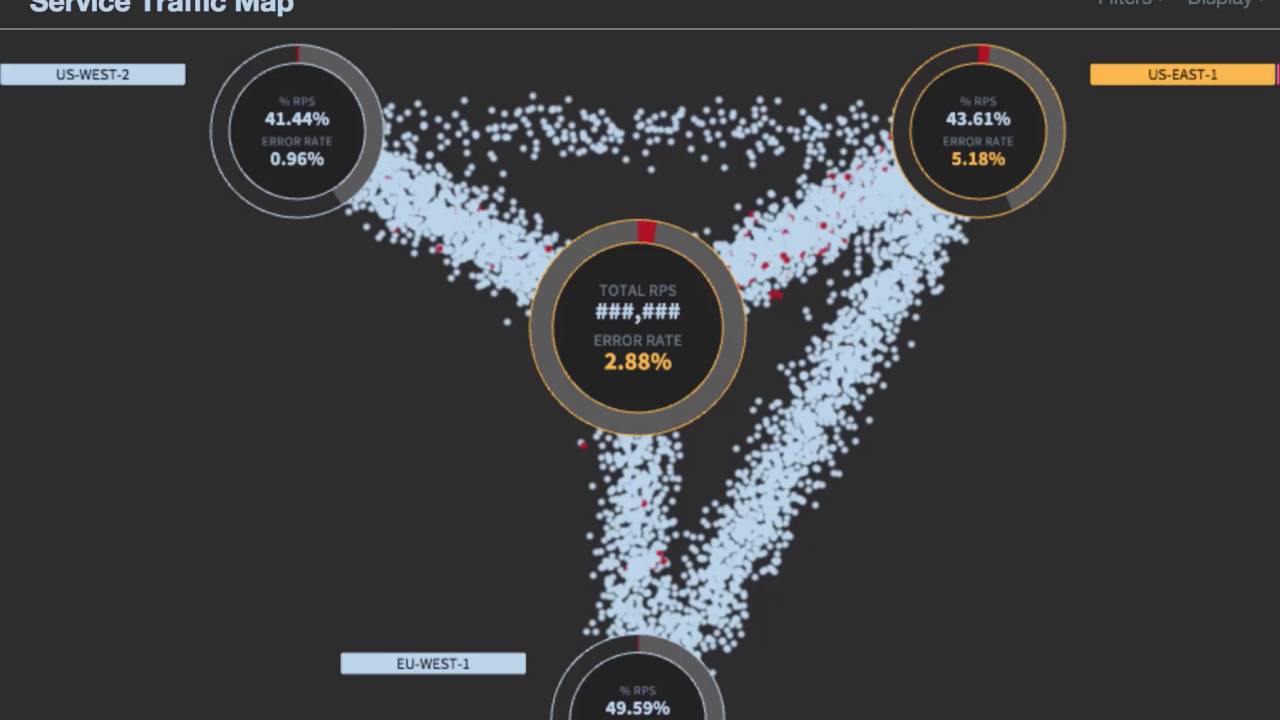

Netflix's tool for visualizing microservices traffic:

https://medium.com/netflix-techblog/vizceral-open-source-acc0c32113fe