I’m currently trying to use Livebook to work with the suite of ex_cldr packages, but I’m tripping over the automatic execution of doctests.

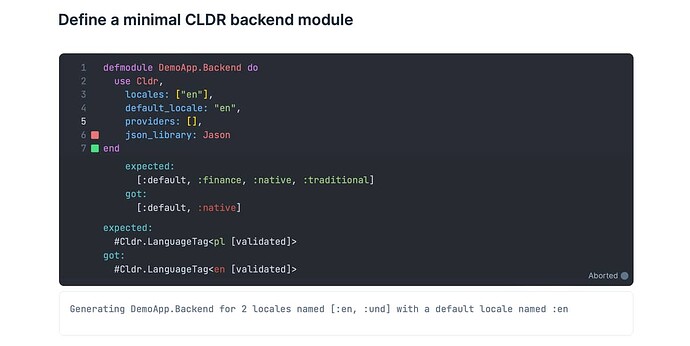

For example, with a minimal ex_cldr backend module definition, I see this error when the cell executes:

Evaluation process terminated - an exception was raised:

** (MatchError) no match of right hand side value: []

lib/livebook/runtime/evaluator/doctests.ex:57: Livebook.Runtime.Evaluator.Doctests.report_doctest_result/2

lib/livebook/runtime/evaluator/doctests.ex:27: anonymous fn/2 in Livebook.Runtime.Evaluator.Doctests.run/2

(elixir 1.14.5) lib/enum.ex:975: Enum."-each/2-lists^foreach/1-0-"/2

lib/livebook/runtime/evaluator/doctests.ex:24: Livebook.Runtime.Evaluator.Doctests.run/2

lib/livebook/runtime/evaluator.ex:465: Livebook.Runtime.Evaluator.continue_do_evaluate_code/6

lib/livebook/runtime/evaluator.ex:330: Livebook.Runtime.Evaluator.loop/1

(stdlib 4.3) proc_lib.erl:240: :proc_lib.init_p_do_apply/3

The problem is that `use Cldr` in the backend module generates a lot of code, and that generated code includes doctests. Those doctests would normally pass, but the test setup they rely on does not seem to be fully replicated when the code is generated this way.
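For reference, a minimal backend of the kind described here might look like the sketch below. The module name `MyApp.Cldr`, the locale list, and the dependency versions are assumptions chosen for illustration; they are not necessarily what is in the screenshot above.

```elixir
# Setup cell: install ex_cldr plus a JSON library for it to use.
# The version requirements are illustrative assumptions.
Mix.install([
  {:ex_cldr, "~> 2.37"},
  {:jason, "~> 1.4"}
])

# Backend cell: `use Cldr` expands into many generated functions,
# and their @doc strings contain iex> examples (doctests).
defmodule MyApp.Cldr do
  use Cldr,
    locales: ["en"],
    default_locale: "en"
end
```

Evaluating the second cell is the point at which Livebook picks up the doctests from the newly defined module and tries to run them.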

Would it make sense to add a flag for skipping doctests in cases like these?