FWIW, my personal belief is that no one knows enough about software development to be able to make rules: it’s just too young a discipline.

But, I also believe that:

-

defining helper functions as private sets a demarcation layer in my code: if the code is to be used by a third party, then making a function private means that I’m free to change it without worrying about unintended consequences.

-

tests can be helpful for some functions. Which ones? The ones where there’s doubt; the ones which are pivotal to the rest of the code; the ones where the tests help you explore the problem.

These two things do not necessarily correlate. I often find myself writing internal (private) functions which need tests.

“But, Dave” say those in the never-test-a-private-function camp. “Why not just test them indirectly via the public functions that use them?”

Because, I say (outside of quotes), that is often a lot of work. The higher level the function, the more state it assumes, and the harder the test is to write. And, typically, when you invoke these higher level functions, you’re calling a number of lower-level ones. The test loses focus.

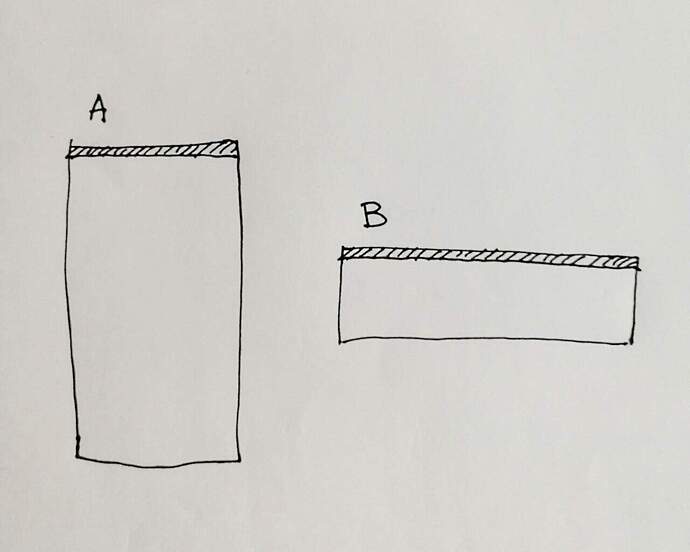

Let’s look at this a different way. Let’s think about the demarcation of code in GenServers.

When I write GenServers, I typically split them into two files. One file contains the server stuff: start_link, init, and all the handle_xxx calls, the other contains the implementation.

Here’s part of a typical server:

defmodule ExMdns.Server.ReceiveDNSPackets do

alias ExMdns.Impl.PubSub

alias ExMdns.Impl.ReceiveDNSPackets, as: Impl

use GenServer

require Logger

@me __MODULE__

def start_link(_) do

GenServer.start_link(__MODULE__, [], name: @me)

end

def init(_) do

PubSub.join()

{ :ok, Impl.initial_state }

end

def handle_info({ :pubsub, msg=%PubSub.PacketReceived{}}, state) do

state = Impl.handle_incoming_packet(state, msg.data)

{ :noreply, state }

end

def handle_info({ :pubsub, _ }, state), do: { :noreply, state }

end

None of these functions contain any logic (apart from pattern matching on their parameters. Instead, they delegate to a second module that contains the actual logic.

The functions in this second module contain the implementation of the logic behind the server. The server module calls an implementation function with the server state as a parameter, along with any additional parameters from the handle_xxx. The implementation function returns an updated state.

Here’s the implementation corresponding to that server:

defmodule ExMdns.Impl.ReceiveDNSPackets do

alias ExMdns.Impl.PubSub

require Logger

def initial_state() do

[]

end

def handle_incoming_packet(state, raw_packet) do

try do

DnsPackets.decode(raw_packet.data)

|> publish_replies()

rescue

e ->

Logger.error("Ignoring invalid DNS packet: #{inspect raw_packet}")

Logger.error(inspect e)

end

state

end

defp publish_replies(packet) when packet.header.qr == :reply do

for answer <- packet.answers do

PubSub.publish(%PubSub.AnswerReceived{ answer: answer })

end

end

defp publish_replies(packet) do

Logger.info("ignoring query\n#{inspect packet, pretty: true}")

end

end

Why is this relevant to testing? Because I can test the implementation functions standalone, without firing up a GenServer. And it’s easy to do, because my tests just create the state to pass in, and then verify the state that comes back. In fact, I rarely run any genservers in test: My application.ex looks like this:

def start(_type, _args) do

children = case Mix.env do

:test ->

[ ]

_ ->

[

Server.Cache,

Server.ReceiveDNSPackets,

Server.UdpInterface,

# Registration,

]

end

opts = [strategy: :one_for_one, name: ExMdns.Supervisor]

Supervisor.start_link(children, opts)

end

What has this to do with private functions?

It’s an illustration of how I believe that testing convenience trumps following rules.

Typically, Elixir developers would put the implementation functions in the same module as the server stuff. They’d then struggle with whether they should be private. Purity insists that they should: they are accessed via the GenServer.call interface, and so shouldn’t be exposed externally. Make 'em private, and then test via GenServer calls. Or, make 'em public, test them directly, and feel guilty.

Instead, this two-module approach sidesteps the dogma. In my apps, nothing in a subdirectory of lib/ should be accessed externally. Instead, I have a top-level api.ex that delegates each API function, calling .Server files if the implementation of the function uses a GenServer, and calling the implementation files directly for stateless functions.

It then becomes immediately obvious to any external user of the code what they’re allowed to use: anything with more than one module name in the path is internal to my application. It may be public, but that’s for my convenience and not for your use.

Do I do this consistently? No: to be honest I’m experimenting with each app I write. This is where I am now.

TLDR; forget what people tell you is right. Do what you feel makes your code easier to work with.