Hello @arkgil, I came across your work on the Telemetry “ecosystem” through this tweet:

https://twitter.com/_arkgil/status/1084454450415190016

I’ve been playing a little bit with :telemetry, Telemetry.Poller and Telemetry.Metrics (which has been released on hex.pm in the meantime).

I really like what I’ve seen so far: a simple abstraction for instrumenting, aggregating and dispatching metrics.

I’m developing a Git platform à la GitHub and I’m currently diving into error and metric tracking.

My first attempt was to rely on the Influx ecosystem (InfluxDB, Chronograf, Kapacitor) with the help of the Exometer library. Recently, I tried different services such as New Relic and AppSignal.

Still, I would rather roll my own metric tracking system than rely on third-party services.

Ecto 3.0 already uses :telemetry. I’m also confident that Phoenix will integrate :telemetry for HTTP/WebSocket instrumentation in the near future. Other libraries such as Absinthe might do so soon as well.

For me, the really nice thing about Telemetry is that I don’t have to clutter existing code with a lot of metric-related stuff (aggregation, third-party service integration). I can have a totally separate application containing the metric-related code and voilà.
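To make that concrete, here is a rough sketch of what I have in mind. The module name, handler id and event name are all made up for illustration, and the `:telemetry.execute/3` call at the end only stands in for whatever an instrumented library would emit. It assumes the :telemetry package (0.4 or later) is available as a dependency.

```elixir
# Assumption: :telemetry (0.4+) is a dependency of the project running this.
{:ok, _} = Application.ensure_all_started(:telemetry)

defmodule MyMetrics.QueryLogger do
  # Hypothetical module living in its own application,
  # separate from the code that emits the events.

  # Hypothetical event name; a real library defines its own.
  @event [:my_app, :repo, :query]

  def attach do
    :telemetry.attach("my-metrics-query-logger", @event, &__MODULE__.handle_event/4, nil)
  end

  # Handlers receive the event name, a measurements map,
  # a metadata map and the config given at attach time.
  def handle_event(_event, measurements, metadata, _config) do
    IO.inspect({measurements, metadata}, label: "query event")
  end
end

:ok = MyMetrics.QueryLogger.attach()

# Stand-in for the instrumented code: the emitting side knows nothing about the handler.
:telemetry.execute([:my_app, :repo, :query], %{duration: 1_200}, %{source: "users"})
```

The emitting code only calls `:telemetry.execute/3`. Everything about what happens to the measurements stays in the separate module.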

Right now, it feels like the ecosystem is in its first steps and I would love to see more documentation (hexdocs.pm, blog posts, code examples, etc.).

In your tweet, you talk about a Phoenix fork. Do you have to fork some Phoenix internals or only provide your own Telemetry instrumenter?

I’ve also been using your Telemetry StatsD implementation to try things out and would really appreciate it if you could provide a basic Phoenix/Ecto integration example.

Also, most metric tracking services I’ve used so far use something like a “transaction” in order to group different metrics together.

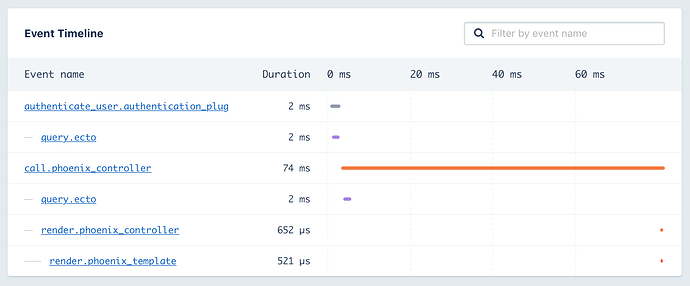

For example, AppSignal can give me an event timeline as follow:

It also offers the possibility to attach metadata (such as the authenticated user for example) to each transaction.

Could something like a transaction be implemented in a manner similar to Telemetry.Metrics? Let’s say we want to provide an event timeline like the one in my screenshot above. How can I group/categorize :telemetry events in order to know that the incoming Ecto query event is part of the Phoenix controller block?

Thank you in advance, and kudos for the great work so far. I’m really impressed.

The new version uses Telemetry 0.4.0, which means that it can emit multiple measurements in a single event. For example, all the memory measurements are carried in a single event now.
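As a rough illustration of what a multi-measurement event looks like from the handler's side, here is a minimal sketch. It assumes the :telemetry package (0.4 or later) is available. The `[:vm, :memory]` event name, the handler id and the `MemoryHandler` module are assumptions chosen for the example, and the event is emitted by hand rather than by the poller.

```elixir
# Assumption: :telemetry (0.4+) is a dependency of the project running this.
{:ok, _} = Application.ensure_all_started(:telemetry)

defmodule MemoryHandler do
  # Hypothetical handler: forwards the whole measurements map to the pid passed as config.
  def handle_event([:vm, :memory], measurements, _metadata, pid) do
    send(pid, {:memory, measurements})
  end
end

:ok = :telemetry.attach("memory-handler", [:vm, :memory], &MemoryHandler.handle_event/4, self())

# Stand-in for the poller: a single event whose measurements map carries
# every value reported by :erlang.memory/0 (total, processes, ets, ...).
:telemetry.execute([:vm, :memory], Map.new(:erlang.memory()), %{})

# Handlers run synchronously in the emitting process, so the message is already in the mailbox.
receive do
  {:memory, m} -> IO.puts("total=#{m.total} processes=#{m.processes}")
end
```

A handler picks the keys it cares about out of the one map instead of subscribing to a separate event per value.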