Is there a list of hardware (i.e. GPUs) that is compatible with Nx/Axon/etc.? (Apologies if I missed this information.) Does the general “rule” hold that any Nvidia card is a good match?

Great question - I’ve been wondering the same!

Does anyone know if the built in GPU of a MacBook Pro would be ok or would I need to get an eGPU? My Mac has an AMD Radeon Pro 5500M 8 GB (and Intel UHD Graphics 630 1536 MB).

Related thread for external GPUs: Which external GPU are you using for Nx/Axon?

Don’t Nx backends use NIFs under the hood? For the XLA backend, for example, there is a clear specification. The XLA backend uses CUDA, so you most probably have to trace things back to that dependency, but this only applies to NVIDIA graphics cards. The way I see it, there is still a big gap when it comes to official, efficient, universal drivers on Unix systems, as the main market for graphics cards is currently gaming, which is nonexistent on Unix systems. This will change with time; however, it is a sensitive topic, as writing drivers for such devices takes a lot of resources.

We definitely need more info on this, I can’t have been the only one thinking any OpenCL card probably would have been ok, even if not as performant as some of the others.

So basically it’s as @bdarla said, we need a modern Nvidia card?

Which NVIDIA cards are CUDA?

CUDA works with all Nvidia GPUs from the G8x series onwards, including GeForce, Quadro and the Tesla line. CUDA is compatible with most standard operating systems.

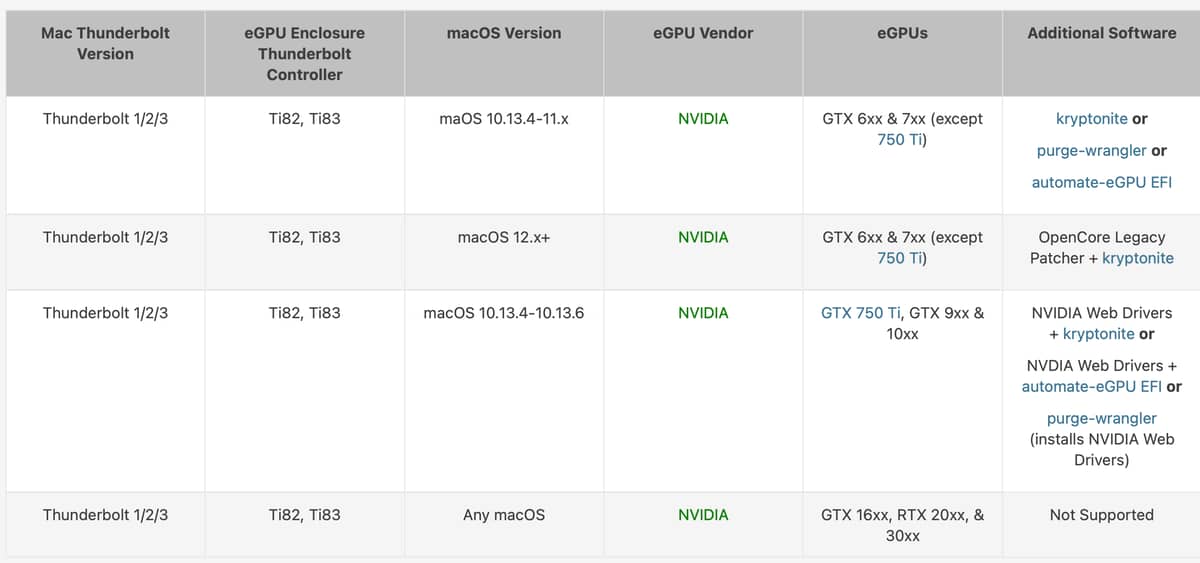

And from the site in the eGPU thread that’s these (for Mac users):

How do you decide between them? Does the ‘additional software’ column matter if it’s just going to be for Nx?

XLA also supports AMD’s ROCm, but Nvidia/Cuda is the way to go?

AMD ROCm is the first open-source software development platform for HPC/Hyperscale-class GPU computing. AMD ROCm brings the UNIX philosophy of choice, minimalism and modular software development to GPU computing.

Which AMD cards support ROCm?

ROCm officially supports AMD GPUs that use the following chips:

- GFX9 GPUs
  - “Vega 10” chips, such as on the AMD Radeon RX Vega 64 and Radeon Instinct MI25
  - “Vega 7nm” chips, such as on the Radeon Instinct MI50, Radeon Instinct MI60 or AMD Radeon VII, Radeon Pro VII
- CDNA GPUs
  - MI100 chips such as on the AMD Instinct™ MI100
  - MI200 such as on the AMD Instinct™ MI200
- RDNA GPUs
  - Radeon Pro V620
  - Radeon 6800 Workstation

So is there anything I have to do, say on Linux with an AMD GPU, to get Nx working? I’ve never touched it but may be interested.

As a beginner / student in this I like to see other practitioners’ information (I’m assuming some are on a low-budget and are thoughtful about what is worth spending money on).

One such video demonstrating benchmarking ML using an older graphics card vs a newer one is this one:

I’m in the same boat as you, Hubert, but from my understanding there are some notable differences between ROCm and CUDA: ROCm is open source, but Nvidia CUDA cards are said to be more performant and to have better compatibility/support (they’ve been around for 10 years).

AMD brought out GPUFORT last year to help developers move off CUDA:

This new GPUFORT project will live under the Radeon Open eCosystem (ROCm) umbrella and is their latest endeavor in helping developers with large CUDA code-bases transition away from NVIDIA’s closed ecosystem.

There is already HIPify and other efforts made by AMD the past several years to help developers migrate as much CUDA-specific code as possible to interfaces supported by their Radeon open-source compute stack. Most of those efforts to date have been focused on C/C++ code while GPUFORT is about taking CUDA-focused Fortran code and adapting it for Radeon GPU execution.

Source: AMD Publishes Open-Source "GPUFORT" As Newest Effort To Help Transition Away From CUDA - Phoronix

It also seems that ROCm doesn’t work on all AMD cards (even newer ones) and that AMD are still figuring out their game, whereas most Nvidia cards (even consumer models) from the last 10 years or so are CUDA compatible. This has allowed CUDA to dominate the space, which makes Nvidia cards/CUDA the safe bet.

That’s the impression I’m left with anyway, maybe someone with more experience can let us know their thoughts.

What’s the tldr David? Are newer cards better?

This AMD ROCm thing is wild: my old GPU that I boxed up just a month ago is supported and the new one is not? Pfff

TLDR

The Whisper model finished in 1 minute on the newer card vs 6 minutes on the older card vs 22 minutes on CPU only.

The guy is very impressed with his 12 GB RTX 3060.
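To put those three timings side by side, here is a rough sketch that only divides the numbers quoted in the TLDR. It assumes all three runs processed the same input, which the summary does not actually state, and the names are just illustrative.

```python
# Run times in minutes, as quoted in the TLDR (same workload assumed).
whisper_minutes = {"cpu_only": 22, "older_gpu": 6, "newer_gpu": 1}


def speedup(baseline_min, candidate_min):
    """Return how many times faster the candidate is than the baseline."""
    return baseline_min / candidate_min


newer_vs_cpu = speedup(whisper_minutes["cpu_only"], whisper_minutes["newer_gpu"])
newer_vs_older = speedup(whisper_minutes["older_gpu"], whisper_minutes["newer_gpu"])
older_vs_cpu = speedup(whisper_minutes["cpu_only"], whisper_minutes["older_gpu"])

print(f"newer GPU vs CPU only:  {newer_vs_cpu:.1f}x")
print(f"newer GPU vs older GPU: {newer_vs_older:.1f}x")
print(f"older GPU vs CPU only:  {older_vs_cpu:.1f}x")
```

So even the older card is a large step up from CPU only, and the newer card adds a further multiple on top of that.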

I came to realise (from a bit of digging) that more GPU memory matters more (to me) than an incremental increase in GPU speed since, as per another comment, you can load larger models and get more accurate results, or even complete the run of a large model at all (e.g. on an Apple M1 Max, which has a ton of shared RAM, running PyTorch: Running PyTorch on the M1 GPU).

But then this project sounds better - GitHub - bigscience-workshop/petals: 🌸 Easy way to run 100B+ language models without high-end GPUs. Up to 10x faster than offloading

TLDR shared access to GPUs to run big models

From the provided link on the “petals” project:

System requirements: Petals only supports Linux for now. If you don’t have a Linux machine, consider running Petals in Docker (see our image) or, in case of Windows, in WSL2 (read more). CPU is enough to run a client, but you probably need a GPU to run a server efficiently.

I am afraid using Apple Silicon based machines is not yet a promising direction (unfortunately). Nevertheless, thank you for sharing this information!

So I’ve browsed a few more posts/articles about ML on Macs and the vibe I’m getting is that if you’re a Mac user and need CUDA, don’t bother. Instead:

- Use AWS or some cloud service (about $3 an hour).
- Build a Linux workstation and just SSH into it.
- Or, if you want to use your Mac, get something like a Razer Core X and dual boot into Linux or Windows (or run from an SSD), and don’t get a high-end GPU unless you really need it (wait for them to come down in price or see what happens in the space).

Does that sound about right? Maybe things have moved along since?

I’ve done the same - I have a Fedora 37 box with a 1080 in it. I’ve used Linux on and off since '96 and at the moment it’s finally feeling usable without a lot of tinkering.

My problem now is that Fedora 37 ships with CUDA 12 and everything else seems to be stuck on 11.7/11.8. Sigh. Maybe someday this will all work easily.

Awesome - perhaps if you get a minute you could write a blog post or guide to help others get set up?

Also what do you think you might use for production? (I wrote up some options here :D)

I got this working with the RTX 3060 and found it was painless if you can install Pop!_OS and the Nvidia drivers + CUDA 11.2. I also recorded a short benchmark and included a side-by-side of fine-tuning on CPU vs GPU to give people a sense of how much faster the feedback loop is when you bolt on this Nvidia GPU.

https://toranbillups.com/blog/archive/2023/04/29/training-axon-models-with-nvidia-gpus/

I installed an RTX 3060 in a Thunderbolt eGPU case. I also upgraded my Ubuntu dev box to a 16-core i7 with 64 GB of RAM and a 4 TB NVMe drive.

I’ve come to believe that this is a minimal configuration for local ML development, for small training runs and to generate embeddings for prototype apps.

Nvtop has been super useful for verifying GPU operation. Besides Nx, Ollama has been a great tool for experimentation and learning, and it gives you a RESTful interface for integration with Elixir apps.

Generating one embedding with my setup takes ~90 seconds on the CPU and ~2.7 seconds on the GPU.
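For a feel of what that gap means on a small prototype corpus, here is a back-of-the-envelope sketch. The per-embedding times are the ones quoted above; the corpus size of 1,000 documents is a made-up figure for illustration, and the calculation assumes the time scales linearly with no batching.

```python
# Per-embedding times in seconds, as quoted in the post above.
CPU_SECONDS_PER_EMBEDDING = 90.0
GPU_SECONDS_PER_EMBEDDING = 2.7

# Hypothetical corpus size, chosen only for illustration.
DOCUMENT_COUNT = 1_000


def total_hours(seconds_per_item, item_count):
    """Total wall-clock hours, assuming strictly sequential processing."""
    return seconds_per_item * item_count / 3600


cpu_hours = total_hours(CPU_SECONDS_PER_EMBEDDING, DOCUMENT_COUNT)
gpu_hours = total_hours(GPU_SECONDS_PER_EMBEDDING, DOCUMENT_COUNT)

print(f"CPU: {cpu_hours:.1f} hours")
print(f"GPU: {gpu_hours * 60:.0f} minutes")
```

Under those assumptions, the same job goes from roughly a day on the CPU to under an hour on the GPU.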

Now and then I exceed the memory capacity of the 3060 GPU (12GB/~$300). The workaround is to use smaller models, which are good enough for experimentation and prototyping.

The 3090 GPU (24GB / ~$1500) has 2x the RAM but is 5x more expensive.

Beyond that, Fly.io has on-demand Nvidia A100s - 40GB @ $2.50/hr, 80GB @ $3.50/hr.
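A quick way to compare these options is price per GB of GPU memory and the number of rented hours that would cost as much as buying a card. The sketch below uses only the approximate prices quoted above; it ignores electricity, the rest of the machine, and the fact that an A100 is a much faster card with more memory, so treat it as a rough guide rather than a buying recommendation.

```python
# Approximate figures quoted in the posts above (USD).
cards = {
    "RTX 3060": {"vram_gb": 12, "price_usd": 300},
    "RTX 3090": {"vram_gb": 24, "price_usd": 1500},
}
A100_40GB_USD_PER_HOUR = 2.50  # on-demand rate quoted above


def usd_per_gb(card):
    """Purchase price divided by GPU memory."""
    return card["price_usd"] / card["vram_gb"]


def break_even_hours(card, hourly_rate):
    """Rented hours that cost the same as buying the card outright."""
    return card["price_usd"] / hourly_rate


summary = {
    name: {
        "usd_per_gb": usd_per_gb(card),
        "break_even_hours": break_even_hours(card, A100_40GB_USD_PER_HOUR),
    }
    for name, card in cards.items()
}

for name, row in summary.items():
    print(
        f"{name}: ${row['usd_per_gb']:.2f}/GB, "
        f"same cost as {row['break_even_hours']:.0f} h of a rented A100 40GB"
    )
```

On these numbers the 3060 is the cheaper way to get memory per dollar, and renting only beats buying it if you expect to need a GPU for well under a few hundred hours.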