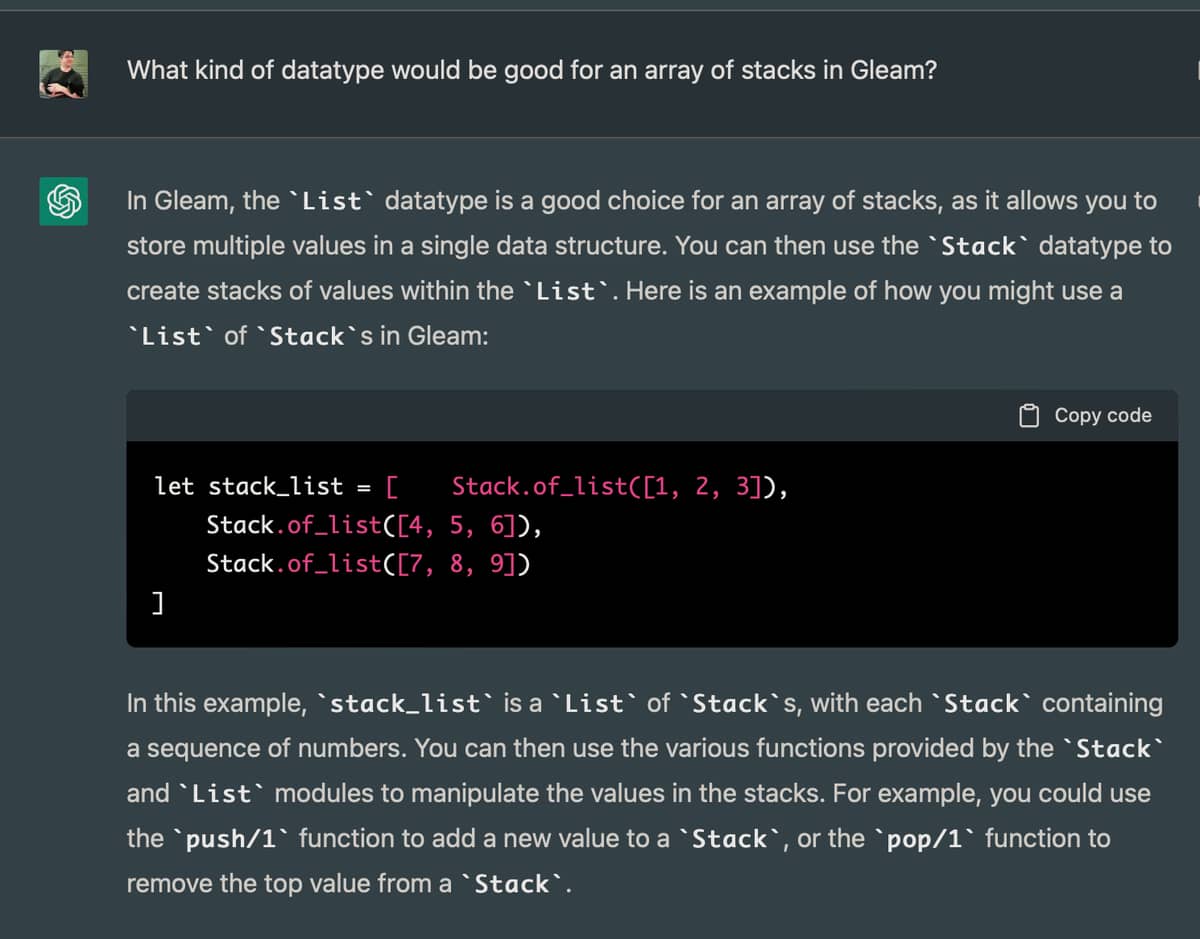

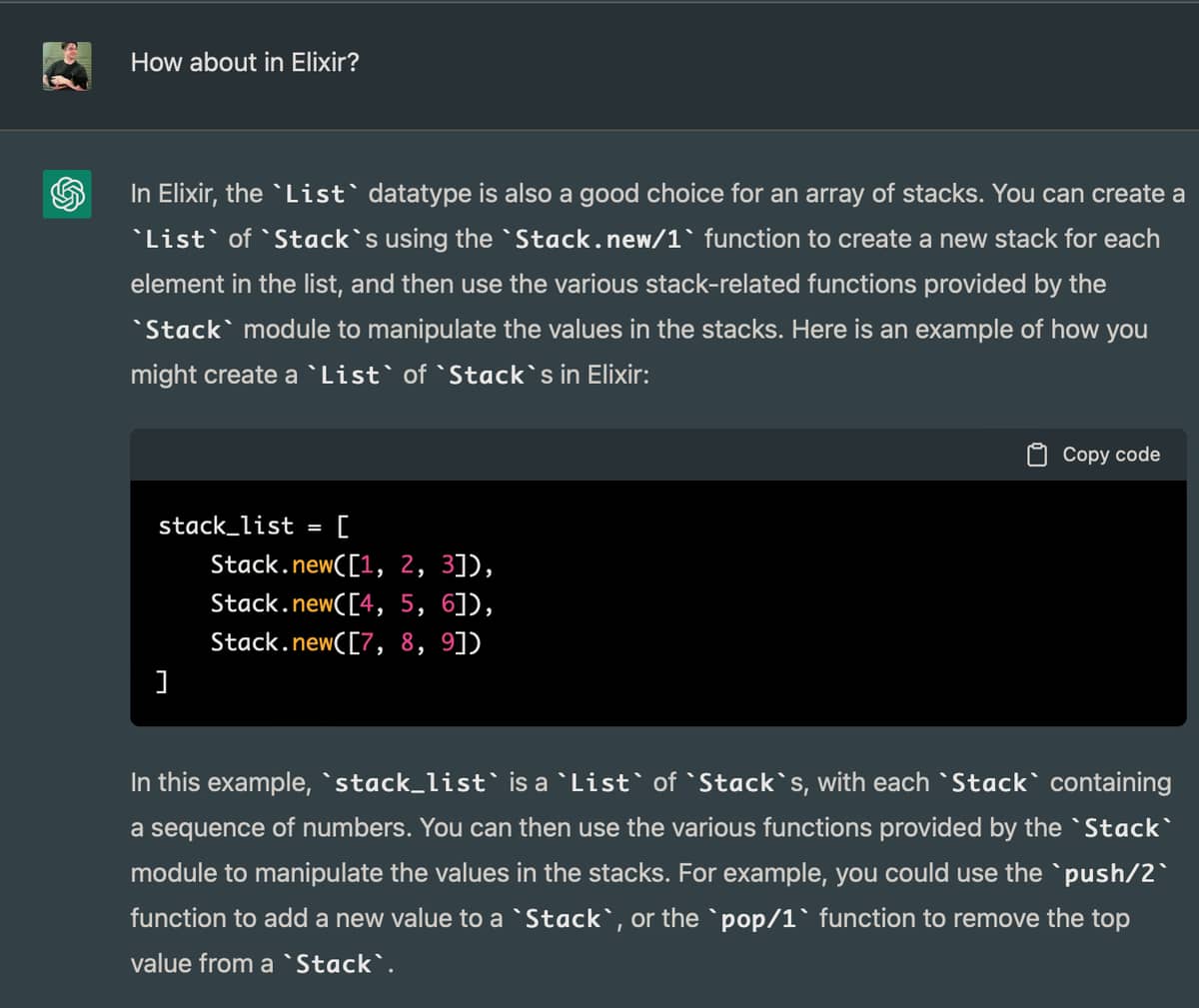

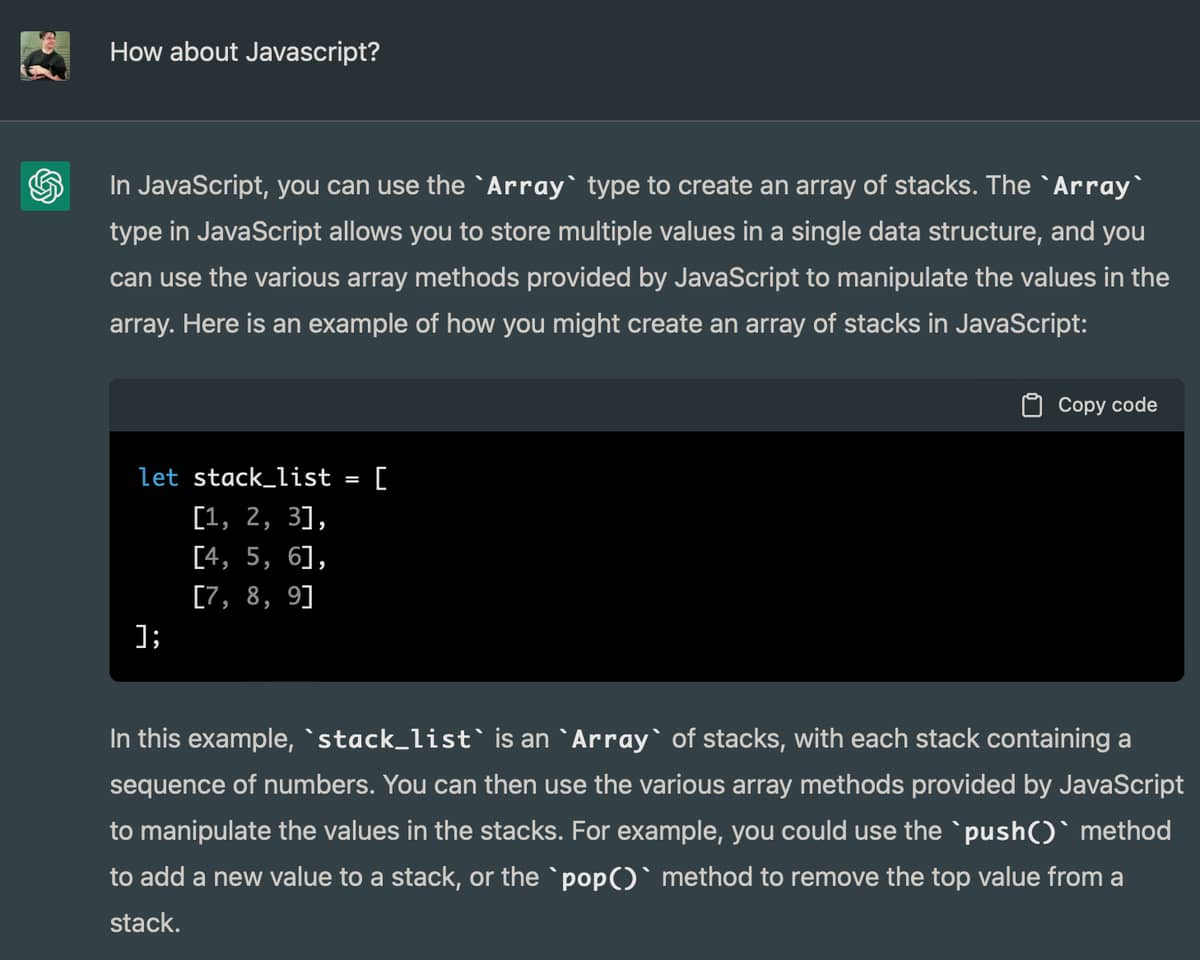

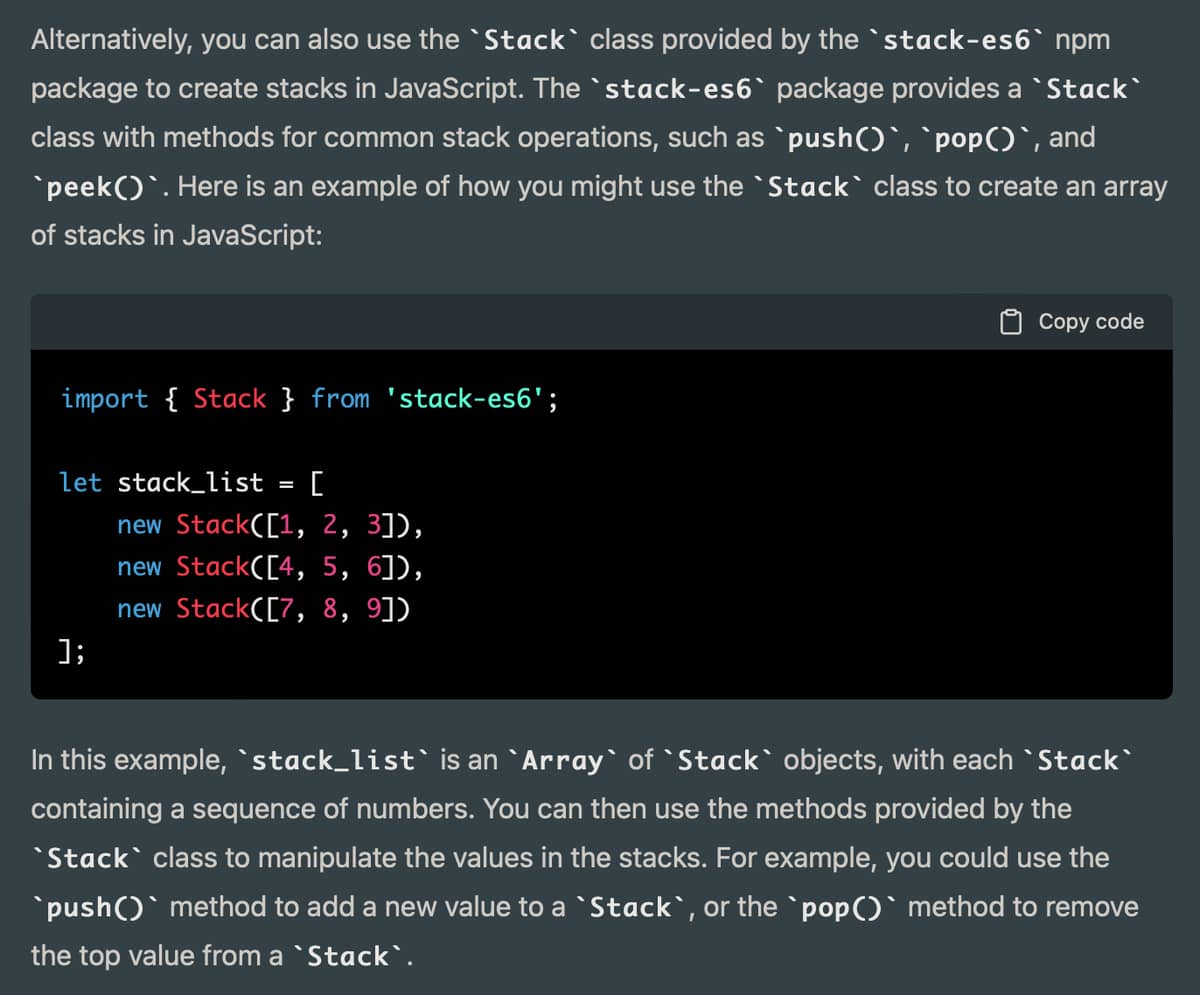

Javascript devs, on the other hand… I’m having this dialog to see if ChatGPT can do pair programming with me. I’m seeing people saying it works for them.

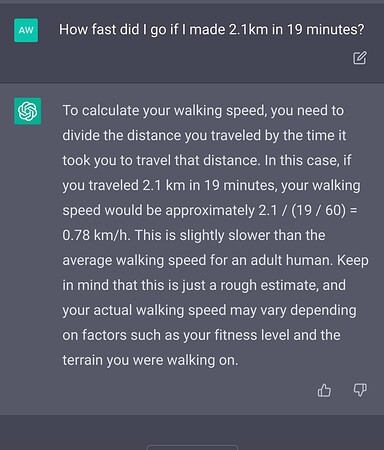

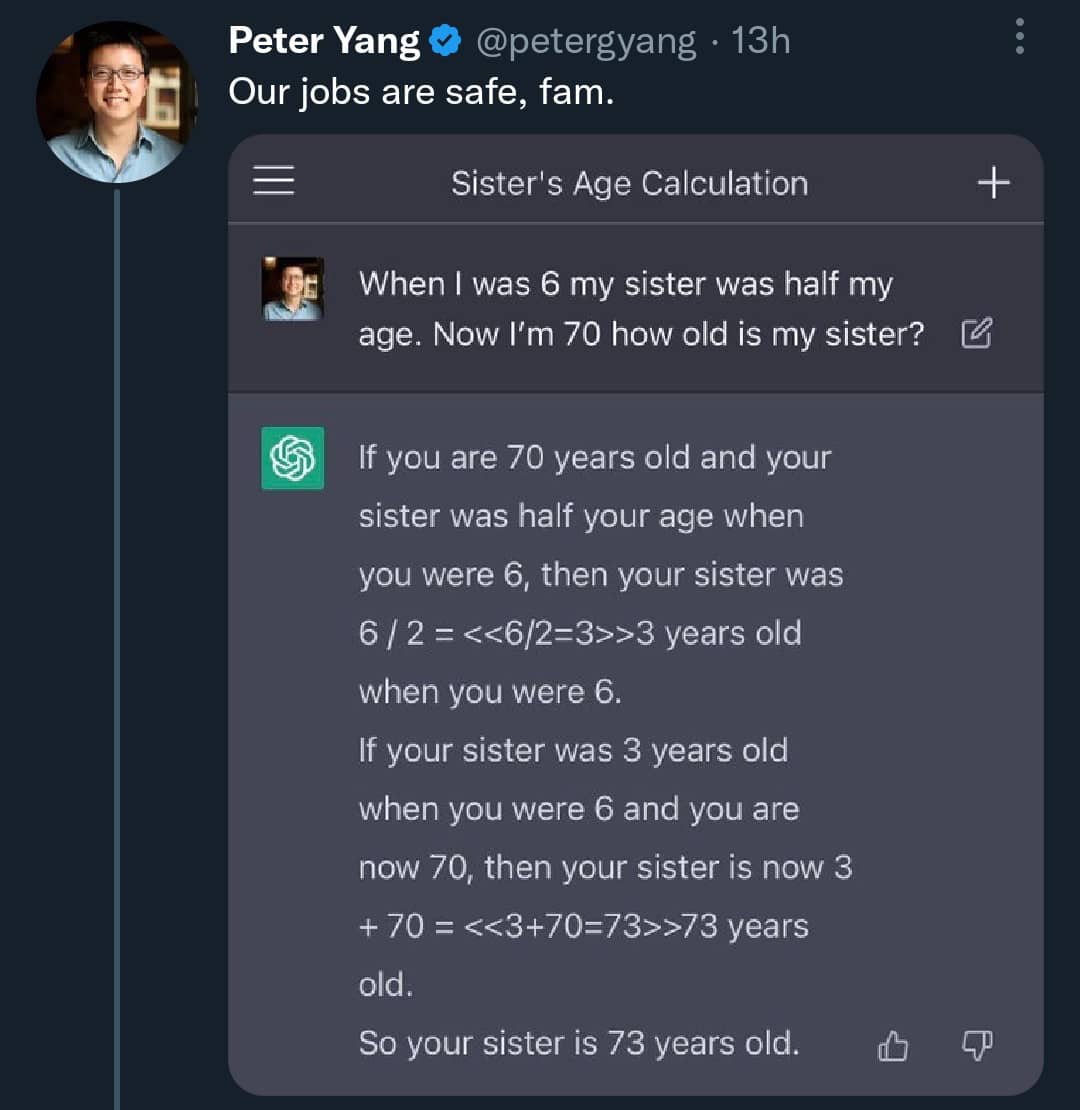

I saw this the other day:

The blog post:

https://thetinycto.com/blog/writing-a-game-using-chatgpt

The game:

It’s going to change how we develop software. This JavaScript Tetris game generated by ChatGPT is great: GitHub - aadnk/TetrisChatGPT: A Tetris game in JavaScript made almost entirely by ChatGPT. But it needed some corrections, so we need to be able to analyze the code and check that it is correct. Tests are going to become more important to make sure the generated code works. And I am sure that in the future, when tests fail, AI will suggest how to correct the code or autocorrect it to pass all the tests. So I think the future of application development will be writing specifications and writing tests. And teaching will be “how to write full specifications and how to write tests that cover all the cases”. Specifications will become like a flexible DSL that can generate code in different languages, and could generate it in the most appropriate programming language depending on the requirements (speed, real-time, …), and teaching programming will be more linked to specific domains (finance, logistics, medical, …), since you need to understand the domain to write specifications.

I am not sure it will really be that big of a deal, as writing the specifications and the tests well enough is as hard and time-consuming as just writing the code. Software that is supposed to write code from high-level specifications has been around for decades without much traction, because in the end you have to check and debug the generated code anyway.

If you check the example I gave for Tetris, with a few sentences in plain English (not in a DSL) it can generate a large block of code with quite complex logic AND modify it. For example, a freelance developer needs these specifications from clients anyway, so it will allow quick iterations. If clients change their minds or add/change specifications, ChatGPT can modify the program/system in seconds instead of the developer spending hours modifying it. The developer will still have to write tests and analyze the code before delivering the final product. So I think it’s going to be a BIG change in app/software development. We will see.

ChatGPT can only make juniors or average programmers obsolete because it generates certain well-known pieces and blocks of code, aka boilerplate.

Even when I was working with Java a lifetime ago I kept wondering why do I have to write these 20 classes by hand when they can be generated rather easily. Eventually of course the community added such generators.

As a senior my job is only 5-10% writing code. Most of the time I am gathering business requirements, documenting, enriching tests (either by increasing coverage, or adding fixtures, or making realistic mocks). When you actually get to the part where you write business logic code, you’re expected to produce it quickly and it must adhere to a number of good practices. That’s why you’re a senior – writing the code is not a challenge to you.

When it comes to generating code, that’s actually a very easy task. But try writing a generator that must parse your DB schema and Ecto models and move a field from one table to another.

I’d be hugely impressed by a tool that can do such activities. Just burping out a bunch of code with predetermined specifications is not a feat.

Now recognizing the human language and linking it to the underlying piece of info is a good technical achievement in itself – but it doesn’t at all mean the tool “understands” code.

Yes, that’s what I was thinking: juniors and average programmers are going to become obsolete; they will have to work in a different way. I am an average programmer. I work in IT, and I only program when I need some scripts or small programs to convert data or transfer it between systems. I also used to develop some internal apps. I think in my case ChatGPT could generate the code that I need, and could even have produced better PHP code than I wrote when I was learning PHP.

I also think ChatGPT is much more powerful than generators: you don’t need to learn generator commands and a specific format, you just give your specifications in English or even in your own language and it produces large blocks of code. When new libraries are released, it will be trained on them and will produce code that uses them. Generators have to be maintained/updated …

To be fair, this smells like the XY problem. At a company I worked at, clients required a lot of features and changes in short periods of time, so we decided that instead of creating or using a templating engine/DSL, we would write the custom business requirements as simple code. This was one of the smartest decisions, because we were able to implement those features painlessly and with tests, without the overhead of custom DSLs or templates. We also never required any seniors, or even mid-level developers, to implement those kinds of features, because the core functionality like scalability was written by seasoned developers who knew what they were doing. The fun thing is that I talked with a lot of developers (some of them with 10+ years of experience) and they said that this is an abomination, without any arguments to back it up…

Exactly this, or that it can generate maintainable code. Even in the most impressive demos I’ve seen, it is still a human doing the modeling, naming things, etc. Google has been able to spit out a pretty decent code example based on a prompt for a long time. This is an impressive advance on that kind of tool to be sure, but it’s a long way from making anybody obsolete in my opinion. The main reason to hire junior developers to work under a senior’s direction is so that they can replace the senior eventually…what it will do is further reduce the value of the parts of programming that can be easily automated, increasing the productivity of the average junior and senior developer. Of course, that will mean fewer people will be needed to develop an application of any given complexity, just as it requires fewer now than it did 30 years ago.

Yep. The truth no businessman wants to hear is that the tools for replacing programmers are almost as far away in the future as a general AI is. And they don’t want to hear it because they have that eternal pipe dream that they will replace all their high-paid employees with machines to which they only have to pay the power and internet bill.

Let them dream. Dreaming is free.

In the meantime more and more seasoned people are giving up on programming, leaving me and many other professionals plenty of room to contract for insane prices.

Code generators are fine. They can either replace less experienced devs or, which is FAR more likely, simply make their work easier – if the employer doesn’t catch up to the fact that they can be partially replaced by the generators (which is a beautiful theory that rarely aligns with reality).

But in the end business requirements always change and products must always evolve. Code generators do exactly nothing to accommodate that. That whole area is stuck and has been stuck for decades – and Elixir is no exception by the way; run mix phx.gen.auth and from then on you are left to your own devices to evolve the resulting code should your security requirements change (and they almost always do at some point).

Truth is, we have absolutely nothing even remotely intelligent when it comes to reading existing projects’ code and changing it meaningfully without a lot of human supervision.

I tried pasting in one of the Advent of Code questions just for fun. That chat-thingy actually wrote code that would solve the puzzle, which I thought was pretty amazing.

Code was horrible and even worse than what I could have come up with in my junior days.

My biggest worry for the future is that I might have to maintain/patch AI-generated abominations like that.

I don’t think this is a problem, because the generated code is not what is going to be maintained. If you have to update or change the requirements, you just update the specifications. The most important thing is that your app/program works and that all your tests pass. And if some tests fail, the AI will autocorrect the code or generate new code that passes all your tests. Right now, to make sure the code works, your specification needs to be close to pseudocode in English.

@dimitarvp said “As a senior developer, you’re expected to produce the code quickly”. That’s true in a programming language you know very well, but if you have to code in a language you don’t know as well, it’s a lot of work. And migrating an app/program from one programming language to another is a very long task. With AI / ChatGPT, from the same specifications you can have the code in another programming language.

If we do a really quick overview of history for levels of programming languages, Assembly then C then Python, I really think ChatGPT is the next level.

If it were only that easy, we wouldn’t have needed programmers for like 20 years now.

There are always some minor details the generators can’t capture. Even if you generate a web app (Elixir with Phoenix and Golang with Gorilla/Fiber have solid generators) you still have to go to the generated code and e.g. disallow certain symbols in emails when validating sign up and sign in requests – I had customers who didn’t want people to use + in email addresses. Other people want DB timeout to be not 5s but 15s. Others want to disable CSRF protection for a domain that only they trust.
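To make one of these details concrete, here is a minimal sketch in Python of the “no + in email addresses” rule. The function name, the `allow_plus` flag and the deliberately simplistic regex are all assumptions made up for illustration; none of this is what Phoenix or any other generator actually emits.

```python
import re

# Deliberately simplistic shape check (assumption: good enough for a sketch,
# not a full email validator).
BASIC_EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def is_valid_signup_email(email, allow_plus=True):
    """Hypothetical sign-up check with one customer-specific rule bolted on."""
    if not BASIC_EMAIL.match(email):
        return False
    local_part = email.split("@", 1)[0]
    # The customer-specific detail: reject "+" in the part before the "@".
    if not allow_plus and "+" in local_part:
        return False
    return True
```

The rule itself is three lines, but somebody still has to know where in the generated code it belongs and which customers want it switched on.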

There are thousands of these pesky little details.

A code generator cannot ever hope to capture them all because even if it does you’d end up writing specs that are 50x bigger than the code – so the value proposition is lost and you might as well just write the code instead.

So sadly, 99% of the time, code generators are a one time affair. It only saves you time making the first variant of the code. From then on it’s entirely on you to maintain and extend it.

To be fair, what we’re discussing is a bit more than a generator. Several of the demos I have seen are making minor changes to somewhat complex code just as in your example. But in the demos the programmer has already decided where the intervention should happen, it just instructs the bot to handle the actual changes. And as @kwando has mentioned, I think the specific way the bot changes the code is generally suboptimal and must be changed from there. It is certainly impressive that it is able to solve the problem at all to be sure, but for a small change it seems like more time, if not exactly more “work”, to involve the bot.

Now, it might be possible to give the bot an entire application and ask for a similar change, either now or in the future, but I shudder to think of the solution. As long as the bot can keep making required changes, well I guess you have something “maintainable,” but the minute it couldn’t you’d potentially have a disaster on your hands. Many of the worst bugs are unforeseen side effects of “successful” changes to behavior that only become evident after deployment. If the bot chooses that moment to fail, and then you’ve got to crawl into a mess of AI-generated code to try and fix a problem that might be causing massive problems already, it would be a nightmare. It seems rather absurd to reason that, well, humans are notoriously bad at building/maintaining software, so let’s hurry and replace them with this amazing software that some humans built and maintain.

I am not the sort of AI skeptic who considers the whole endeavor to be a waste of time. I think we are seeing the development of potentially massively helpful tools. But I am very wary of anyone who proclaims an imminent panacea for all our problems, especially when they stand to benefit financially from persuading people of that claim. Most of our problems are human ones, and the attempt to solve them all with one fell technological swoop is bound to make things worse. So I very much hope decision makers don’t make the mistake of placing the entire dev process into the hands of bots thinking it’s gonna be so much faster/cheaper etc, or much much worse, apply the same logic in other even less pleasant business…

That’s the crux of it IMO. I am not an “AI skeptic”, I am a “capitalism skeptic”. When people stand to gain money from something they’ll dance on their forehead and call the yellow color blue just so you give them your money (and time, and attention) – this is a well-known and quite an old fact. Hence I started getting the grumpy old guy behaviour and I am like “show me a CLI tool to whom I can say ‘remove field X from record Y’ or ‘add integer range validation for field Z’ or ‘make this GraphQL field non-null’ or just GTFO”.

I do hope we’re headed to more intelligent tools. I am not married to commercial programming, in fact I hate it with a passion sometimes, and I’d love to be able to have tools that can read and “understand” and modify code. But right now it seems like a bunch of 16-year olds that get hyped easily are trying to, you know, hype something up.

Back on topic, I perceive ChatGPT as a replacement for Google search when it comes to coding questions. That’s how it struck me initially and after I tried it a few times and read about it, my impression remained the same. Hopefully it can be used to improve search in general because that’s still a problem that’s not well-solved to this day, and it’s still something that people need 24/7.

I’m sure Google worked better before the SEO industry came along, and it seems to get worse year by year. Unfortunately it is still a lot better than Bing for answering programming questions. I feel that ChatGPT will need to be pay-per-use rather than ad-driven to prevent its results being corrupted over time.

I have to say, ChatGPT is pretty amazing for a range of activities. I have a small training course project where I’m the SME (supposedly) - ChatGPT has done a pretty good (not great) job of turning bullet points into a video script and certainly provided me with a valuable head start. Given that it’s early days and should only get better, there are many white collar workers that are going to have to think hard about “adding value” rather than doing repetitive drudge work.

If I was to be asked for career advice, something like electrician, farmer, stonemason or artisan baker will likely have more longevity than an ad copywriter. Or whatever roles are required to keep folks who are immersed in the metaverse alive and not too smelly.

Writing a good test is an order of magnitude harder than writing actual code implementing something. Ask me how I know.

Test quality is the best way to tell a senior from a junior engineer. In the latter case, if there are tests at all, they are likely trivial “sequence of actions” tests checking expected outcomes. By contrast, experienced developers go all the way to property-based tests and modeling (down to temporal models sometimes).
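For anyone who hasn’t seen the difference, here is a rough sketch in Python using only the standard library. The function under test, `dedupe_keep_order`, is a made-up example, and the hand-rolled random loop is only a stand-in for what a real property-based testing library does (those also shrink failing inputs, which this does not).

```python
import random


def dedupe_keep_order(items):
    """Hypothetical function under test: drop duplicates, keep first occurrences."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def test_example_based():
    # "Sequence of actions" style: one hand-picked input, one expected output.
    assert dedupe_keep_order([3, 1, 3, 2, 1]) == [3, 1, 2]


def test_property_based(runs=500, seed=0):
    # Property style: many generated inputs, each checked against invariants
    # that must hold for every possible input.
    rng = random.Random(seed)
    for _ in range(runs):
        items = [rng.randint(0, 9) for _ in range(rng.randint(0, 20))]
        result = dedupe_keep_order(items)
        assert len(result) == len(set(result))       # no duplicates remain
        assert set(result) == set(items)             # nothing lost or invented
        assert dedupe_keep_order(result) == result   # running it twice changes nothing
        # Elements appear in order of their first occurrence in the input.
        assert result == sorted(set(items), key=items.index)
    return runs
```

The first test only proves that one case works. The second forces you to say what “correct” means in general, which is the hard part.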

Like any other automation, ChatGPT will someday automate routine tasks, the ones that should not even need to exist. The aforementioned “boilerplate code” is one of them.

Yet I have one observation I’m interested in sharing. A properly designed language + framework should not need any of the boilerplate. But human beings write systems in an incremental way, because that’s how nature built us (by randomly generating gene sequences and then evicting those not fitting the changing environment). That’s why we start with a clean and nice C and eventually get to the C++20 abomination.

What I am wondering about: could any model (not necessarily GPT) get to a state where we can use it to safely refactor an existing system, with the goal of simplification, cleanup and removal of deprecated stuff? It should be possible to use the original implementation to test against, so writing the tests should not be a problem.
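The “test against the original implementation” idea can be sketched roughly like this in Python. Both `legacy_total` and `refactored_total` are invented stand-ins, and `find_mismatch` is a hypothetical helper, not part of any tool: it feeds the same random inputs to the old and the new code and reports the first input where they disagree.

```python
import random


def legacy_total(prices, discount_percent):
    """Stand-in for the original implementation (hypothetical)."""
    total = 0
    for p in prices:
        total = total + p
    if discount_percent > 0:
        total = total - (total * discount_percent) // 100
    return total


def refactored_total(prices, discount_percent):
    """Stand-in for the cleaned-up version (hypothetical)."""
    subtotal = sum(prices)
    return subtotal - subtotal * discount_percent // 100


def find_mismatch(old, new, runs=1000, seed=0):
    """Return the first (prices, discount) where old and new disagree, else None."""
    rng = random.Random(seed)
    for _ in range(runs):
        prices = [rng.randint(0, 10_000) for _ in range(rng.randint(0, 10))]
        discount = rng.randint(0, 100)
        if old(prices, discount) != new(prices, discount):
            return (prices, discount)
    return None
```

Two caveats: this only says the two versions agree on the inputs that were sampled, and it faithfully preserves every bug in the original, so it checks “same behaviour”, not “correct behaviour”.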