Hello everyone,

This is my first post here, and I am hoping someone can help expand my knowledge, as I am clearly not handling a situation we have run into properly.

We are using ExCrypto, which delegates to the underlying :crypto.block_encrypt/decrypt.

Our Ecto schema looks something like this:

schema "bank" do
  field :uuid, :binary
  field :iv, :binary
  field :tag, :binary
end

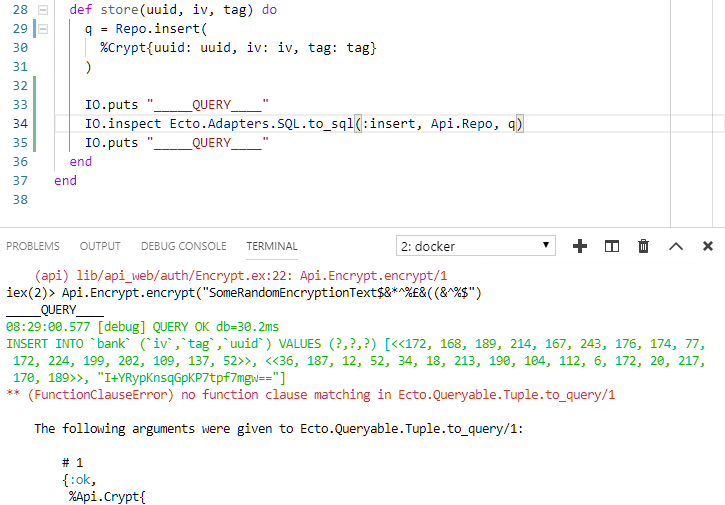

Part of the logic calls ExCrypto.rand_bytes, which returns a random binary.

For example, if I insert it using Repo, I receive the expected struct, which allows me to decrypt some cipher text.

%Api.Bank{
  __meta__: #Ecto.Schema.Metadata<:loaded, "bank">,
  id: 2,
  iv: <<155, 6, 136, 177, 177, 49, 143, 88, 129, 95, 129, 232, 197, 222, 16, 200>>,
  tag: <<76, 5, 248, 99, 14, 234, 17, 201, 220, 233, 231, 86, 228, 193, 77, 102>>,
  uuid: "a2eQg6kJiQ82CdpQD5SjBA=="
}

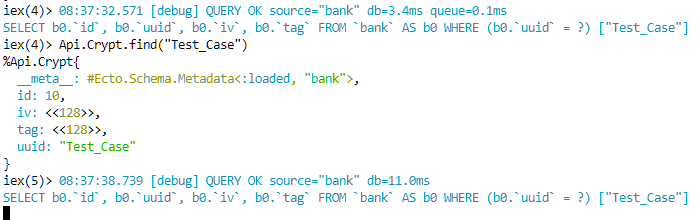

When I requery the database by that particular uuid (a random string), I seem to get completely different output.

%Api.Crypt{
  __meta__: #Ecto.Schema.Metadata<:loaded, "bank">,
  id: 2,
  iv: <<63, 6, 63, 63, 63, 49, 63, 88, 63, 95, 63, 63, 63, 63, 16>>,
  tag: <<76, 5, 63, 99, 14, 63, 17, 63, 63, 63, 63, 86, 63, 63, 77, 102>>,
  uuid: "a2eQg6kJiQ82CdpQD5SjBA=="
}
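
Comparing the two tag values byte by byte, it looks as though every byte above 127 came back as 63, which is the ASCII code for "?". The sketch below is only my attempt to reproduce that pattern in memory; the variable names are made up for illustration, and it says nothing about where in the stack the substitution actually happens.

```elixir
# The tag as it was inserted, and as it came back from the query above.
original = <<76, 5, 248, 99, 14, 234, 17, 201, 220, 233, 231, 86, 228, 193, 77, 102>>
reloaded = <<76, 5, 63, 99, 14, 63, 17, 63, 63, 63, 63, 86, 63, 63, 77, 102>>

# Replace every byte outside the 7-bit ASCII range with 63 ("?").
simulated =
  for <<byte <- original>>, into: <<>> do
    if byte > 127, do: <<63>>, else: <<byte>>
  end

# Prints true: the simulated substitution matches the reloaded tag exactly.
IO.inspect(simulated == reloaded)
```

If that reading is right, the binary is being treated as text in some character set on its way to or from the database, rather than as raw bytes.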

This tells me that the data handed to Repo changed somewhere between the insert call and the value being stored in the database.

Working with this data in memory is fine using ExCrypto.encrypt and ExCrypto.decrypt, but as soon as I persist it to the database and retrieve it, it's garbled.

The reason it's being persisted is that we use a backend RabbitMQ queue, which picks up the work at a later date.

My question is: am I misunderstanding how binaries work in Elixir, or is something happening to my raw binary further down the line when Repo inserts it?

Base.encode64 and Base.decode64 work fine; nothing is lost on the way to and from the database, which makes me question how the raw binary is being handled here.
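
For reference, the Base64 round trip I mean looks roughly like this. It is a minimal sketch using the iv from the first struct above; the variable names are only for illustration, and the actual insert and query through Repo are left out.

```elixir
iv = <<155, 6, 136, 177, 177, 49, 143, 88, 129, 95, 129, 232, 197, 222, 16, 200>>

# Base64 output consists of ASCII characters only, so no byte exceeds 127.
encoded = Base.encode64(iv)
ascii_only = Enum.all?(:binary.bin_to_list(encoded), fn byte -> byte < 128 end)

# Decoding restores the original 16 bytes.
{:ok, decoded} = Base.decode64(encoded)

IO.inspect(ascii_only)
IO.inspect(decoded == iv)
```

Since the encoded form never contains a byte above 127, it would not be affected by whatever is replacing the high bytes in the raw binary.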

We use a MySQL database (5.6) with InnoDB and the utf8mb4_0900_ai_ci collation, and the columns are BLOBs.

Help is much appreciated,

Many thanks
